Latest Articles from the world of Artificial Intelligence

27 April 2026

Caveman: Why AI Talking Like a Caveman is Worth It

Yabba-dabba-doo! Remember Fred Flintstone? He lived in Bedrock, drove a car powered by bare feet, had a dinosaur as a crane, and a pterodactyl as a record player. Yet, looking closely, that Stone Age civilization already possessed everything needed for a comfortable modern life: functioning gadgets, efficient technology, practical solutions. It just lacked the shiny veneer of progress. Perhaps we should have realized then that the essentials are enough, and that adding complexity doesn't necessarily mean adding value.

24 April 2026

Learning memory: Karpathy challenges RAG with an evolutionary knowledge base

There is a moment that anyone who has worked intensely with a language model knows well: the reset. You are building something complex, perhaps an elaborate software architecture or research weaving together dozens of sources, and the model has understood everything, keeps the thread, responds with surgical precision. Then the session ends, or you reach the context limit, and the AI forgets everything. It starts from zero. You have to explain again who you are, what you are doing, what decisions you made together. It’s like Christopher Nolan's film "Memento," where the protagonist must tattoo information on his body because short-term memory doesn't work: brutal, redundant, and deeply frustrating.

20 April 2026

The Code That Isn't Written: CodeSpeak and the Specification Revolution

There are names in the programming world that carry a specific weight. Andrey Breslav is one of them. If millions of Android developers write code in Kotlin today instead of Java, it is largely thanks to him: Breslav was the lead designer of the language that JetBrains unveiled in 2011 and that Google officially adopted as a supported language for Android in 2017, at the Google I/O that changed the mobile ecosystem forever. He is not an academic theorizing from a chair: he is one of those who built tools used every day by hundreds of thousands of people in the real world, with all the compromises, production bugs, and pressures that this entails.

20 April 2026

Gemma 4 locally: 26 billion on my PC

There is a particular satisfaction in running something you were advised not to download. Not the satisfaction of the hacker who forks a system, which is another matter, but the quieter, more artisanal satisfaction of someone who tightens the screws a little beyond the recommended torque and discovers that the structure holds anyway. It's the kind of satisfaction I found this week, while Gemma 4 26B ran on my consumer PC with a fluidity I didn't expect.

15 April 2026

10 rules for using AI in business

Let's start with a fact that serves as a mirror. According to the McKinsey State of AI report of November 2025, 88% of organizations already use AI in at least one business function. Yet, in the same period, the World Economic Forum and Accenture estimated that fewer than 1% of these have fully operationalized a responsible AI approach, while 81% remain in the most embryonic stages of governance maturity. The paradox is plain: almost everyone uses AI, almost no one really governs it.

15 April 2026

Project Glasswing: Claude Mythos and the mysterious model

Anthropic presents a security initiative to defend critical software in the era of artificial intelligence. At the center is Claude Mythos Preview, the most powerful model ever developed by the company, capable of finding vulnerabilities that humans haven't found in thirty years. The paradox is that you won't be able to use it.

13 April 2026

TurboQuant: One bit to redefine the limits of artificial intelligence

At the end of April 2025, four researchers from Google Research and New York University published a paper on arXiv with a sober title: *TurboQuant: Online Vector Quantization with Near-optimal Distortion Rate*. For months, almost no one talked about it outside of academic circles. Then, in March 2026, Google published a post on the official blog announcing TurboQuant as a breakthrough in the efficiency of language models, with acceptance at ICLR 2026, and within forty-eight hours the paper appeared on every tech feed. Announcements of compression ratios above five times with no loss of quality, enthusiastic headlines everywhere. A one-year delay, a wave of hype.

10 April 2026

MIT and “Humble” AI: How to teach models to say “I don't know”

There is a thought experiment that MIT researchers use to explain the problem at the heart of their research. Imagine an intensive care physician at three in the morning, after a twelve-hour shift. An AI-generated diagnosis appears on the monitor: bacterial pneumonia, 94% probability. The doctor has a doubt, a gut feeling that something isn't right. But the number is there, precise, authoritative. And the doctor gives in.

08 April 2026

Leonardo Foundation: Italy in the Era of AI - Part 2

In the first episode, we mapped the Italian artificial intelligence system: a 1.2 billion market in strong growth but split between large companies and SMEs, two supercomputers among the top five in Europe, sovereign language models unique on the continent, and an AI law that makes Italy the first EU country to have one. Real excellence, with a paradox at the center: hardware and cloud remain dependent on foreign suppliers, and the regulatory advantage holds only if the implementing decrees arrive. In this second episode, we get down to the concrete fabric: companies, public administration, brain drain, and the roadmap for 2030.

06 April 2026

Leonardo Foundation: Italy in the Era of AI - Part 1

On March 19, 2026, in the Queen's Hall of the Chamber of Deputies, a document was presented that stands out clearly from the category of institutional reports destined to collect dust on some ministerial shelf. "Italy in the Era of AI. Growth, Challenges, and Perspectives of an Ongoing Revolution" was born with a declared ambition: not a speculative exercise, not an optimistic manifesto, but an operational map of the state of artificial intelligence in Italy, with recommendations accompanied by measurable indicators, precise institutional responsibilities, and analysis of barriers to implementation. It is, in a literal sense, what the title promises: a compass.

03 April 2026

MiniMax M2.7: The AI that learns from itself (almost)

One month. That is the time that separates M2.5 from M2.7, the new version of the MiniMax model released on March 18, 2026. In a sector where development cycles were once measured in years and then in months, a few weeks have become the new normal. But this time, the gap between releases is the least interesting detail.

01 April 2026

MIT Fulfills the Promise of X-Ray Glasses

Anyone who was a teenager between the late 70s and early 80s remembers well the feeling of flipping through the last pages of *L'Intrepido* or *Lanciostory*: amidst the advertisements for ballpoint pens and correspondence drawing courses, an ad stood out promising the impossible. X-ray glasses. Real ones. For a few lire, shipping included. The promise was crystal clear: wear them and you will see through anything. Walls, boxes, clothes. The reality, as every teenager who gave in to temptation bitterly discovered, was much more prosaic: a card with a curious optical effect based on light diffraction, capable at most of making one's own hand look transparent. A scam worthy of Totò, a cheap illusion.

30 March 2026

Your Model, Your Rules: Mistral Forge and Proprietary AI

There is a subtle misconception at the heart of how most companies use artificial intelligence today. They send prompts to models trained on billions of internet pages, books, articles, forums, and public code on GitHub, and expect answers calibrated to their internal reality. But that internal reality—the operating procedures of a pharmaceutical company, the maintenance manuals of a turbine, the standard contracts of a Milanese law firm, the compliance policies of a bank—has never entered any training dataset. It is like asking someone who has read the entire Treccani encyclopedia to explain how the internal approval process for a vacation request works in your company. The answer will be generic, polite, and useless.

27 March 2026

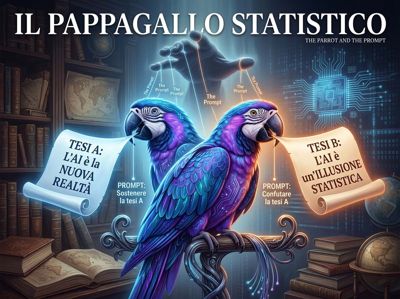

How an LLM Always Tells the Truth You Want to Hear

In English-speaking universities, there is a discipline called competitive debate, which has never found the space it deserves in Italy. The rules are simple and brutal: they assign you a thesis, any thesis, and you must defend it with everything you have. Then they assign you the opposite thesis, and you do the same. The goal is not to find the truth; it is to understand how argumentation works, its muscles, its blind spots, its rhetorical tricks. Professional debaters have been doing this for centuries. Now machines do it too. And much better than us.

25 March 2026

Beyond the Arctic Circle: An AI Born from a University Thesis is Rewriting European Football

If you are an Inter fan, I already know how you feel. That evening of February 24, 2026, stuck to you like a wet jersey after extra time. Don't take it personally, that's just how football goes. But if you can step away from your scarf for a moment and look at what really happened that night at San Siro, you will discover something much more interesting than a defeat. You will discover that Bodø/Glimt did not beat you by chance, and that behind those two goals by Hauge and Evjen (the ones that closed the two-legged tie with a 5-2 aggregate score, eliminating the previous season's Champions League finalist) there is a story that concerns the future of European football. In fact, of football itself.