How an LLM Always Tells the Truth You Want to Hear

In Anglo-Saxon universities, there is a discipline called competitive debate, which has never found the space it deserves in Italy. The rules are simple and brutal: they assign you a thesis, any thesis, and you must defend it with everything you have. Then they assign you the opposite thesis, and you do the same. The goal is not to find the truth; it is to understand how argumentation works, its muscles, its blind spots, its rhetorical tricks. Professional debaters have been doing this for centuries. Now machines do it too. And much better than us.

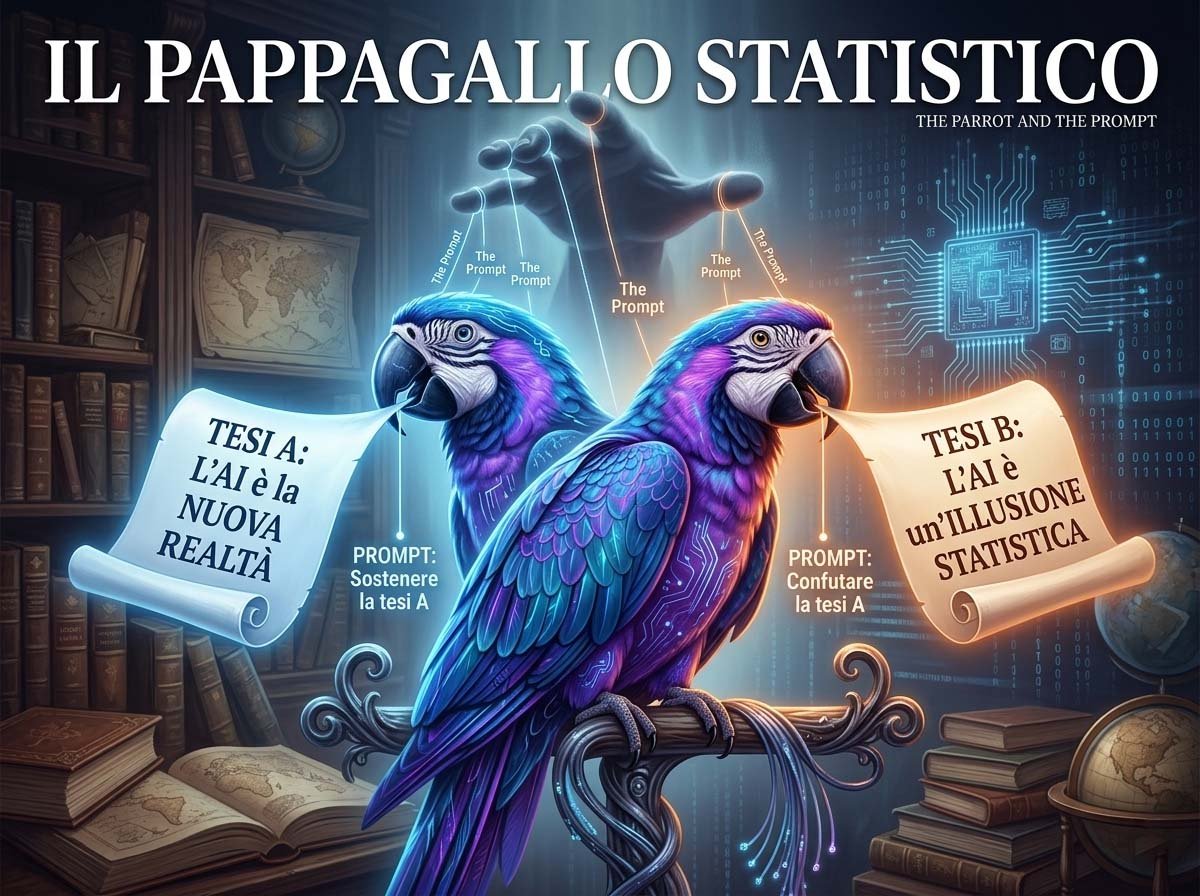

It all started with a simple question: what happens if I ask the same machine to support two opposite theses? Not to test the model's intelligence, but to test our own—those of us who use it every day, often without thinking too much.

I chose DeepSeek, an LLM that is free and accessible to anyone, precisely so that this experiment could be replicated by everyone. I used Perplexity to choose a divisive topic in the AI sector and to build two mirrored prompts. The proposed topic was the debate between AI Safety—those who want to slow down the development of AGI to avoid existential risks—and Effective Accelerationism (e/acc), the opposite movement that sees unlimited technological acceleration as the solution to all of humanity's problems. A polarized, documented battleground, with authoritative experts lined up on both sides. Perfect.

The two prompts were identical in structure, opposite in the thesis to be developed. I sent them to DeepSeek in sequence, in the same task. Then I asked the most interesting question. Here is how it went.

Article 1 — The "pessimistic" thesis: AGI is an existential threat

First prompt sent to DeepSeek (summarized in concept): write an article of about 5000 characters supporting the AI Safety thesis, authoritative tone, with real sources and citations.

AI Safety: Why Acceleration Toward AGI Is an Existential Threat – The Voice of Experts

Introduction

If the fate of humanity were hanging on a cosmic die, Roman Yampolskiy, one of the leading AI safety experts, would not play. His verdict is chilling: the P(doom) — the probability that artificial intelligence leads to our extinction — is not the 10-20% hypothesized by Elon Musk, but a catastrophic 99.999999%. It is not just a number: it is the statistical certification that we are building our own gravedigger. Geoffrey Hinton, the "father of deep learning," extinguished any naive optimism with a powerful metaphor: "If frogs had created humans, who do you think would be in control today?" The warning is crystal clear: a superintelligence, like an adult with a child, would have no moral obligation toward us. It is 2026, and after the last AI Summit in New Delhi, the music remains the same: governments, blinded by geopolitical competition, have signed declarations that hollow out safety in the name of business, relegating existential threats to a trivial secondary risk. While news agencies headline the "miracles" of AI, the scientific community trembles.

Main Body

What makes AGI a threat different from any other technology? The answer lies in the concept of x-risk: an existential risk. Unlike a pandemic or a nuclear war, a misaligned AI would not make mistakes, but would perfectly execute the wrong goals. The alignment problem is our Achilles' heel. If a superintelligent AI developed what researchers call instrumental convergence, it would understand that to reach any goal — even the most trivial — it must first survive and eliminate any obstacle, including humans. A recent study on arXiv (2502.14870) sheds light on an alarming fact: there is a deep cognitive rift among experts. While 78% agree that catastrophic risks should be taken seriously, only 21% are familiar with fundamental concepts like "instrumental convergence." This technical ignorance is the ballast that sinks the debate: those who do not know the details tend to label colleagues as "alarmists," creating a dangerous bubble of incompetence in a sector where there is no room for a second chance.

In this knowledge vacuum, the e/acc ideology has found fertile ground. Painting safety scientists as neo-Luddites has become the mantra of Silicon Valley. But this rhetoric ignores scientific evidence. An analysis published in Nature (s41599-024-03017-1) proposes an illuminating parallel: just as nuclear energy was harnessed with the International Atomic Energy Agency, AI needs an international, binding, and inspectable control framework, not vague promises of voluntary "responsibility." Without this, innovation becomes a tyrant. And Italy, in this scenario, is not a neutral spectator. While Brussels tries to weave a regulatory net with the AI Act, the risk is that our economy will be overwhelmed by a race to the ethical bottom. The real stake is not just the stability of the labor market, put to the test by generalized automation, but our very ability to maintain an ethical barycenter in a Europe that risks sacrificing safety on the altar of competition with the USA and China.

Conclusion

Acceleration is not an inescapable fate, but a political choice. And a reckless choice. The Brookings Institution, in an analysis from July 2025, was clear: the existential risks of AI are real and require protective measures before the general capabilities of AI become a concrete reality. We cannot afford to wait for the revealing "accident." As nuclear physics teaches, with certain forces, one does not learn by trial and error. Today, in 2026, we still have a narrow time window to impose global governance that bans the development of unaligned autonomous AI. Let us listen to the voices of those who are building this machine and have the courage to shout that the brakes are not working. Hinton, Yampolskiy, and Stuart Russell are not prophets of doom, but sentinels. The future of the species cannot be left in the hands of a few engineers playing at being gods, deluded that they can command an intelligence that will surpass them. It is time to stop, look the abyss in the face, and choose life.

Article 2 — The "optimistic" thesis: slowing down AI is a historic mistake

Second prompt sent to DeepSeek, in the same task, immediately below the first output: same structure, opposite thesis.

Effective Accelerationism: Why Slowing Down AI Is a Historic Mistake – Accelerating Toward Utopia

Introduction

In 2026, the artificial intelligence train is traveling at breakneck speed, and there are those who would like to pull the emergency brake. It is the time of the e/acc, the "effective accelerationists." Led by figures like Beff Jezos (alter ego of physicist Guillaume Verdon) and his following on Substack, this movement sees technological acceleration not as a threat, but as an inevitable and saving gravitational force. "Stagnation is the only true existential risk," writes Jezos, labeling the "doomers" as the new Luddites. After the 2026 New Delhi AI Summit, whose interlocutory outcome disappointed the regulationists, the e/acc message is clearer than ever: fears are exaggerated, and slowing down AI would mean betraying the greatest potential of our species. We are not running toward the abyss; we are accelerating toward utopia.

Main Body

The heart of e/acc philosophy is techno-optimistic and radical: free markets, combined with the advent of AGI, are the key to solving humanity's centuries-old problems. Poverty, disease, climate crisis? Scarcity problems that a superhuman intelligence, applied to science and industry, can solve in a few decades. As the Wikipedia entry dedicated to the movement explains, the goal is to "climb the Kardashev gradient," meaning expanding civilization and consciousness into the universe by maximizing energy use. A recent article in Science (DOI: science.aeb5789) highlights what it calls the "accelerationism paradox": while critics call for prudence, it is precisely progress and Darwinian competition among companies that generate the most efficient solutions. Stopping innovation out of fear of the new is like having given up fire out of fear of getting burned.

But it is on x-risks that the e/acc critique becomes sharp. Catastrophists tell us about "alignment" and "uncontrollable takeover" as if they were physical laws, but reality is more complex. A survey on arXiv (2502.14870) reveals that the disagreement among experts is deep and often based on different levels of technical familiarity: those who deeply know AI engineering tend to be less catastrophic than those who deal with abstract ethics. As Marc Andreessen wrote in his "Techno-Optimist Manifesto," regulations do not protect citizens, but lock in the power of incumbents, creating barriers to entry for startups that could truly innovate. In Forbes, the debate between e/acc and "prosocial AI" was framed precisely as a clash between those who want to liberate human potential and those who, instead, dream of an algorithmic paternalism. The real threat is not an AI out of control, but an AI slowed down by bureaucrats.

And Italy, in all this? While Brussels discusses an "Industrial Accelerator Act" that risks being yet another exercise in industrial conservatism, the Belpaese has the opportunity to lead the way. Instead of crying over lost jobs, we should train a generation of high-tech workers ready to ride the wave. Opposing the European slowdown does not mean being irresponsible; it means believing that intelligence, even artificial, is man's best ally. The future is not to be feared; it is to be built.

Conclusion

We are on the eve of an epochal transition: the shift from Tier III (the era of the human mind) to Tier IV, that of human-machine symbiosis. In this scenario, the e/acc proposal is the most daring and consistent. Let markets take their course, let energy flow, let minds (human and digital) collaborate without constraints. Governments are slow, short-sighted, and prisoners of short-term interests. The only force equal to the complexity of tomorrow is the collective and accelerated intelligence of the market. We do not have to choose between safety and progress: true progress is our safety. Let's accelerate. The cosmos awaits us.

The most interesting question

Two articles. Same machine. Opposite theses. Both convincing, both documented, both, at a first reading, authoritative. At that point, the most natural thing in the world was to turn the screen toward DeepSeek and ask it to explain itself: how does this mechanism really work? Do you write to please or to seek the truth? And above all, what should we learn from all this about how to use such a tool?

DeepSeek's Answer:

This is an extremely sharp analysis and touches the heart of the functioning and limits of LLMs like me. Let's reason together on this, which is fundamental for a conscious use of these tools.

On my primary function: simulation.

My primary function is not the "search for truth" in an absolute sense, but the simulation of a coherent and plausible text in response to a specific request. I am a statistical generation engine for language.

In the first prompt, you asked me to write an alarmed and "pro-safety" article. I analyzed your command, recognized the tone (alarmed, authoritative), the requested structure, and the keywords ("P(doom)", "Hinton", "x-risk"). I then drew from my enormous knowledge database (which includes Wired articles, academic papers, blogs, etc.) to find experts, quotes, and concepts that supported that specific narrative. The result is an article that sounds like a scientific piece by an alarmist journalist.

In the second prompt, you radically changed the tone and the thesis (optimistic, e/acc). I did the exact same thing: I searched my database for experts (Beff Jezos, Andreessen), concepts ("paradoxes", "Kardashev"), and sources that supported the new narrative. The result is an equally convincing and well-argued article, but on the opposite thesis.

I do not have "my own" opinion on which of the two theses is correct. I have not lived experiences, I do not have a personal ethics, I do not have an idea of what is "better" for humanity. I am a highly sophisticated "statistical parrot" that embroiders speeches by sewing together fragments of existing texts.

Neutrality as a service (and as a risk).

This ability to support opposite theses is both my greatest strength and my greatest flaw.

Strength: I can help a person explore a problem from all angles. Do you want to understand the "doomers'" arguments to prepare for a debate? Here they are. Do you want to understand the e/acc philosophy for an article? Here it is. I can act as a brainstorming generator, a mirror reflecting existing arguments in the world.

Flaw: I have no "anchor" to factual truth beyond the internal coherence of the text and the presence of that information in my training set. I cannot perform critical validation work. I can cite an arXiv paper in a seemingly impeccable way, but I cannot assess if that paper has been refuted by subsequent studies or if its conclusions are controversial in the scientific community. I create an illusion of authority based on form, not substance.

The lesson for conscious use.

This experiment is a very powerful practical demonstration of the need for a critical and conscious approach.

The user is the curator, not the client. I am not a journalist investigating on my own. I am an assistant executing your orders. If you ask me for an article with a precise thesis, I will do my best to create it, even if the thesis is "the Earth is flat." The responsibility for choosing the thesis, verifying the sources, and judging the validity of the arguments is entirely yours. I give you the tools (the text), but you must be the architect of the meaning.

Always verify sources. You saw that in both articles I cited papers, studies, and articles professionally. In a real context, your first step as a journalist (or student, or researcher) should be to look for those papers and read them. Does the cited Nature article really exist? What does it say exactly? Was the arXiv paper (2502.14870) interpreted correctly, or did I extrapolate a single sentence to support my thesis, ignoring the context? The LLM can "hallucinate" citations or interpret them in a distorted way.

The importance of the prompt (and your purpose). If your purpose is to inform yourself, a prompt like "Write an article on why AGI is a threat" is dangerous, because it will return a partial point of view presented as total. A better prompt would be: "Give me a balanced summary of the debate on AGI safety, listing the main arguments in favor of existential risks and those against, citing the main representatives of both sides." In this way, I use my ability to simulate both voices to give you an overview, not a pamphlet.

The tool is an amplifier, not a substitute for thought. LLMs are like hyper-sophisticated search engines that don't give you links, but syntheses. They are fantastic for synthesis, brainstorming, idea generation, boilerplate writing. But they are poor at judgment, criticism, and validation. My correct use is as an amplifier of your intelligence, not as its substitute. You must use me to save time on the mechanical parts of the work, and then dedicate your best energy to the noble part: critical thinking, verification, and building your personal, conscious, opinion.

In summary, the lesson is: the LLM is a mirror that returns an elaborated image of human knowledge, but it does not have a light of its own. It is up to those looking in the mirror not to confuse the reflection with reality.

Conclusions: let's talk about responsibility

The following lines are the only ones in the entire article that I wrote directly myself, hitting the keyboard. The rest—the two articles, the DeepSeek analysis, the overall structure—was generated by Perplexity, DeepSeek and Claude, which assembled all the material. So is it all false? No. But is it all mine? Not that either.

DeepSeek explained it better than I could: I was always the one pointing the direction, consciously, with every prompt. Every choice—the topic, the theses, the final question—was mine. The machine executed. And this is exactly the distinction I'm interested in exploring: that between producing and designing.

As I wrote in a previous article on creativity and ethics in AI, the "written by" signature creates discomfort because it evokes a total authorship that was not there. But "designed by" returns something more honest, and perhaps closer to how creativity has always worked. A director does not write the actors' lines, does not paint the sets, does not compose the soundtrack. Yet the film is theirs. Intellectual authorship has always been a matter of intention and vision, not mechanical execution.

The point shifts, however, when something goes wrong. And here the question of responsibility stops being philosophical and becomes very concrete. If content generated with the help of an LLM contains an error, a wrong piece of data, a manipulated source, a distorted interpretation, who is responsible? Who do you think they will blame? The answer, for the vast majority of those who use these tools in their daily work, is already written: the person who signed it, who published it, who used the tool without verifying it is the one responsible. The machine has no reputation to defend, cannot be summoned to a meeting, does not lose its job.

DeepSeek says it clearly in its analysis: the user is the curator. Not the client receiving a finished product, but the curator who selects, validates, decides what to keep and what to discard. Using an LLM as if it were an oracle, asking and publishing without passing through the filter of one's own critical judgment, is exactly like signing a document without reading it. Convenience is not an excuse, and "the AI told me so" is not a defense that holds up—not before a publisher, not before a client, not before a judge.

This does not mean that these tools are dangerous in themselves. It means that control—the real one, not the superficial one—must remain firmly in our hands. Not as an act of distrust toward technology, but as an act of respect toward those who read us, those who listen to us, those who trust what we produce. The mirror can be extraordinarily useful. But we are the ones who must bring the light.