Caveman: Why AI Talking Like a Caveman is Worth It

Yabba-dabba-doo! Remember Fred Flintstone? He lived in Bedrock, drove a car powered by bare feet, had a dinosaur as a crane, and a pterodactyl as a record player. Yet, looking closely, that Stone Age civilization already possessed everything needed for a comfortable modern life: functioning gadgets, efficient technology, practical solutions. It just lacked the shiny veneer of progress. Perhaps we should have realized then that the essentials are enough, and that adding complexity doesn't necessarily mean adding value.

Julius Brussee, a Dutch developer, seems to have re-read the lesson with new eyes. His project Caveman, available on GitHub under the MIT license, does only one thing: it forces the model to respond like a caveman. No articles, no pleasantries, no warm-up sentences. Just the technical core, expressed in the most direct way possible.

The problem Caveman seeks to solve is real, and anyone working with paid language models knows it well. Every response from an LLM has a cost measured in tokens, the elementary units into which the model breaks down and recomposes language, something between a syllable and a word. Via API, output tokens are generally more expensive than input tokens, and modern models tend to be generous with words: they introduce every response with courtesy formulas, state the obvious, repeat already known context, and conclude with a summary of what they just said. It is systemic verbosity, trained for years on human feedback that often rewarded apparent completeness over real conciseness.

Let's take a concrete example, the same one reported in the official project documentation. Claude's standard response to a re-rendering problem in React sounds something like this: "The reason your React component is re-rendering is likely because you're creating a new object reference on each render cycle. When you pass an inline object as a prop, React's shallow comparison sees it as a different object every time, which triggers a re-render. I'd recommend using useMemo to memoize the object." Sixty-nine tokens. In Caveman mode, the same information becomes: "New object ref each render. Inline object prop = new ref = re-render. Wrap in `useMemo`." Nineteen tokens. Same diagnosis, same solution, roughly 3.6 times fewer tokens.
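For readers who want to check the arithmetic, here is a minimal sketch in Python. It uses only the two token counts quoted above; the variable names are ours, not the project's.

```python
# Token counts quoted in the project's React example.
verbose_tokens = 69
caveman_tokens = 19

ratio = verbose_tokens / caveman_tokens          # how many times shorter the reply is
reduction = 1 - caveman_tokens / verbose_tokens  # fraction of output tokens saved

print(f"{ratio:.2f}x fewer tokens, {reduction:.1%} saved")
# prints "3.63x fewer tokens, 72.5% saved"
```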

How it works

Technically, Caveman is a .md file to be installed in the environment of the chosen agent with a single command.

Once installed, the tool is activated on request with the /caveman command ($caveman on Codex) or with a phrase such as "talk like caveman", "caveman mode", or simply "less tokens please", and is deactivated with "stop caveman" or "normal mode". Under the hood, it is a set of contextual instructions that modify the model's behavior within a session, without retraining it or modifying its internal parameters.

This is accompanied by a second tool, /caveman:compress, which acts on the opposite side: instead of compressing the model's responses, it compresses the context files that an agent such as Claude loads at every session, typically CLAUDE.md and project note files. The command rewrites these files in caveman style and automatically keeps a readable copy as a backup (CLAUDE.original.md). Everything technical (code blocks, URLs, file paths, commands, headers, dates, version numbers) remains unchanged; only the descriptive prose is compressed. The project's benchmarks on five types of real files show an average reduction of 46% of input tokens per session, with a peak of 59.6% on preferences files.
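The "compress the prose, leave the technical parts alone" idea can be sketched in a few lines of Python. This is not the project's implementation: `compress_prose` is a hypothetical stand-in that only drops English articles, and the sketch protects fenced code blocks only, not URLs, paths, or the other items listed above.

```python
import re

# Matches fenced code blocks, fences included. The capture group makes
# re.split keep the blocks in the result, at the odd indices.
FENCE = re.compile(r"(```.*?```)", re.DOTALL)

def compress_prose(text: str) -> str:
    """Hypothetical stand-in for the real rewriting step: drops English articles."""
    return re.sub(r"\b(?:the|a|an)\s+", "", text, flags=re.IGNORECASE)

def compress_markdown(doc: str) -> str:
    """Compress prose segments only; fenced code passes through untouched."""
    parts = FENCE.split(doc)
    return "".join(
        part if i % 2 == 1 else compress_prose(part)
        for i, part in enumerate(parts)
    )

doc = "Run the tests before a commit.\n```\nnpm run test -- --watch the\n```\n"
print(compress_markdown(doc))
```

The prose line comes out as "Run tests before commit.", while the "the" inside the fence survives because that segment is never passed to `compress_prose`.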

The heart of the mechanism is a set of grammatical and stylistic constraints that a Hackaday commenter summarized precisely: definite and indefinite articles are eliminated, linguistic fillers (just, really, basically, actually) are suppressed, pleasantries (sure, certainly, of course, happy to) are deleted, short synonyms are favored (big instead of extensive, fix instead of implement a solution for), and hedging phrases (it might be worth considering) are prohibited. Sentence fragments are acceptable. The subject is not needed if it is implicit. Technical terminology, however, remains intact: polymorphism stays polymorphism, code blocks are written normally, and error messages are cited verbatim. As the project's README says with self-irony: "Caveman not dumb. Caveman efficient."
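One way to make these rules concrete is a small checker that flags banned phrases in a reply. Caveman itself is a set of instructions given to the model, not a script like this. The sketch below is only an illustration, with word lists limited to the examples quoted above and a function name (`find_violations`) of our own invention.

```python
import re

# Word lists taken from the rules summarized above; nothing else is assumed.
RULES = {
    "article": ["a", "an", "the"],
    "filler": ["just", "really", "basically", "actually"],
    "pleasantry": ["sure", "certainly", "of course", "happy to"],
    "hedge": ["it might be worth considering"],
}

def find_violations(text: str) -> list[tuple[str, str]]:
    """Return (rule, phrase) pairs for each banned phrase found outside inline code."""
    prose = re.sub(r"`[^`]*`", "", text)  # inline code is exempt from the rules
    hits = []
    for rule, phrases in RULES.items():
        for phrase in phrases:
            if re.search(rf"\b{re.escape(phrase)}\b", prose, flags=re.IGNORECASE):
                hits.append((rule, phrase))
    return hits

verbose = "Sure, the problem is basically a new object reference."
terse = "New object ref each render. Wrap in `useMemo`."
print(find_violations(verbose))
print(find_violations(terse))
```

The verbose sentence trips the article, filler, and pleasantry rules; the caveman version returns an empty list.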

This distinction is fundamental to understanding what Caveman actually does. It is not a compressor that cuts off responses in the middle, nor a tool that simplifies concepts to make them more digestible. It is a filter that eliminates communicative surplus—those sentences that models produce not because they contain useful information, but because their training, based on human evaluations that tend to reward elaborate and polite responses, pushed them in that direction. Caveman acts like a strict editor who deletes everything that doesn't add technical value, leaving on the page only what is needed.

The project has meanwhile added granularity that makes it more adaptable than the caveman premise might suggest. Three intensity levels are available, selectable with dedicated triggers: /caveman lite keeps grammar intact and only eliminates fillers, returning a professional but dry output, suitable for contexts where form still matters; /caveman full is the default mode, the one that removes articles, accepts sentence fragments, and aims for the technical core without mediation; /caveman ultra pushes compression to the maximum, with a telegraphic style that abbreviates everything compressible.
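The three levels can be pictured as a small piece of session state. In the real tool it is the model that interprets the triggers through its instructions; the class below is only a conceptual model. It assumes that a bare /caveman selects the default level and that the level stays in effect until changed, and it leaves out the natural-language triggers.

```python
LEVELS = ("lite", "full", "ultra")
OFF_PHRASES = ("stop caveman", "normal mode")

class Session:
    """Toy model of per-session state; None means normal, uncompressed replies."""

    def __init__(self) -> None:
        self.level = None

    def handle(self, message: str) -> None:
        words = message.strip().lower().split()
        if " ".join(words) in OFF_PHRASES:
            self.level = None
        elif words and words[0] == "/caveman":
            # Bare "/caveman" selects the default level, "full".
            requested = words[1] if len(words) > 1 else "full"
            if requested in LEVELS:
                self.level = requested
        # Any other message leaves the current level untouched.

session = Session()
for msg in ["/caveman ultra", "why re-render?", "/caveman lite", "stop caveman"]:
    session.handle(msg)
    print(f"{msg!r:20} -> {session.level}")
```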

The selected level persists for the entire session until it is explicitly changed. The biggest surprise lies elsewhere: the project README also documents a mode in literary classical Chinese, wenyan (文言文), the written language used in China for over two thousand years until the 20th century, known for a semantic density with few equivalents in modern languages. The idea is not a gimmick: wenyan packs a great deal of meaning into very few characters, which is what makes it attractive for saving tokens, and Brussee integrated it with the same progressive logic as the English levels, from /caveman wenyan-lite, which maintains grammar but eliminates the superfluous, to /caveman wenyan-ultra, which the README describes with some irony as the mode for the ancient scholar short on budget. Of course, with wenyan, the savings are extreme, but then you have to understand what it writes...

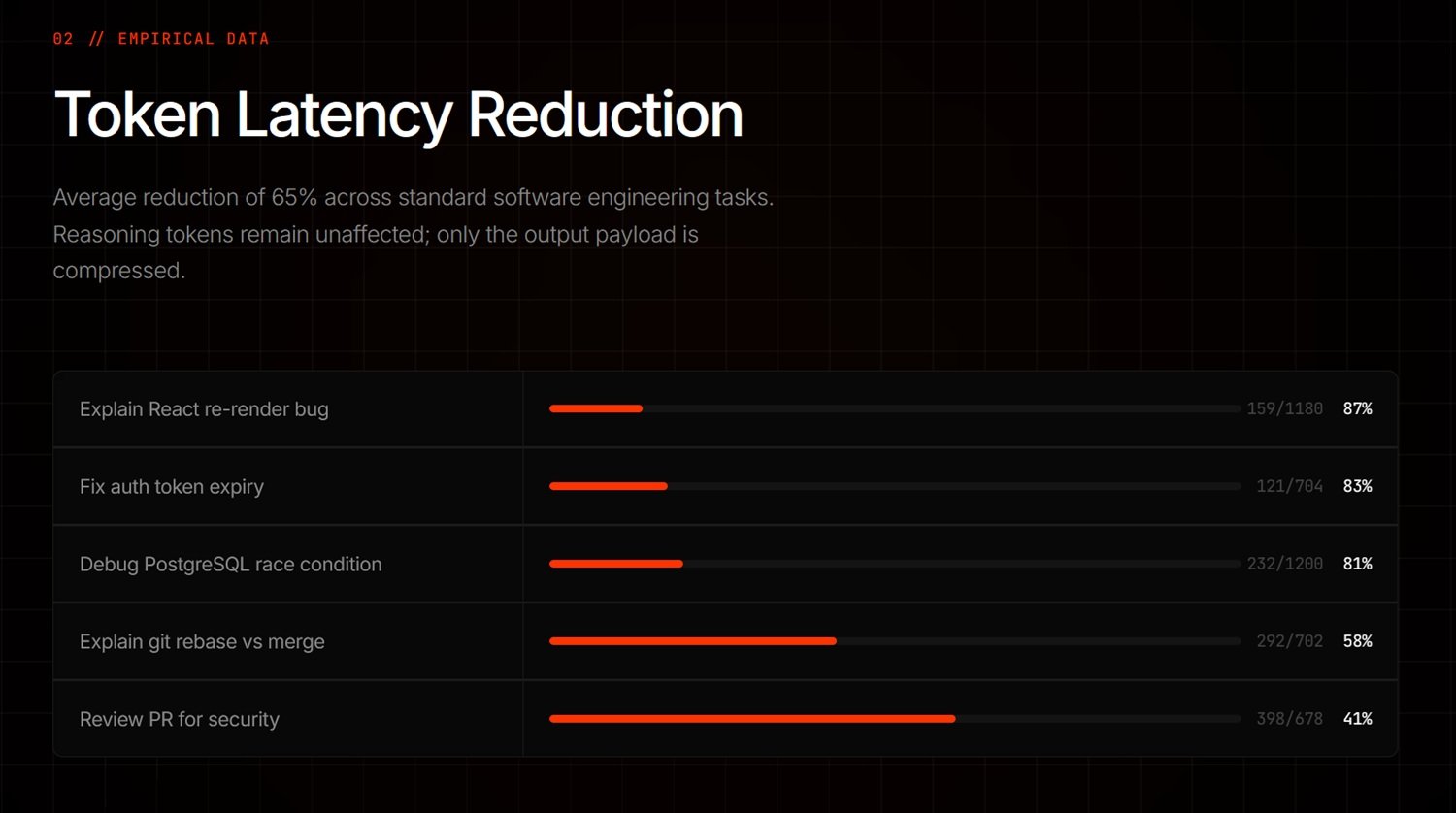

Benchmark data reported on the project page indicate reductions that vary with the type of task: 87% on React re-rendering problems, 83% on authentication middleware debugging, 81% on PostgreSQL race conditions, down to 58% for git rebase vs merge explanations and 41% for security reviews on pull requests. The declared average settles around a 65% reduction in output tokens. It is important to note that these numbers concern output tokens only, not the entire session: input tokens (context, system instructions, and tool calls) are not affected by the response style and can represent a significant portion of the total cost, especially in long sessions or with many calls to external tools.
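A quick back-of-the-envelope calculation shows why the distinction matters. The prices and token counts below are invented for illustration and do not correspond to any real provider or session; only the 65% figure comes from the project.

```python
def session_cost(input_tokens, output_tokens, in_price, out_price):
    """Cost in dollars, with prices expressed per million tokens."""
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000

# Hypothetical prices ($ per million tokens) and session size.
IN_PRICE, OUT_PRICE = 3.0, 15.0
input_tokens, output_tokens = 200_000, 20_000

baseline = session_cost(input_tokens, output_tokens, IN_PRICE, OUT_PRICE)

# Apply the declared 65% average reduction to output tokens only.
terse_output = round(output_tokens * 0.35)
terse = session_cost(input_tokens, terse_output, IN_PRICE, OUT_PRICE)

saving = 1 - terse / baseline
print(f"baseline ${baseline:.3f}, caveman ${terse:.3f}, total saving {saving:.1%}")
```

With these assumed numbers, a 65% cut in output tokens translates into a saving of roughly 22% on the whole session, because the input side of the bill does not move.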

The Science of Silence

So far, one could dismiss Caveman as a nice trick, one of those micro-projects that circulate on GitHub for a few days and then disappear. What makes it interesting from a broader perspective is its connection to an emerging scientific literature studying the relationship between brevity and language model performance, and the results of that literature are, to say the least, counterintuitive.

The reference paper is "Brevity Constraints Reverse Performance Hierarchies in Language Models", published on March 11, 2026, by MD Azizul Hakim on arXiv. The study starts from an anomalous observation: on a subset equal to 7.7% of the problems evaluated, distributed across five different datasets, larger language models perform worse than smaller models by as much as 28.4 percentage points, despite having 10 to 100 times more parameters.

The mechanism identified as the cause is what the paper calls scale-dependent verbosity, a prolixity that grows as model size increases. Larger models tend to over-elaborate their responses, adding reasoning steps, qualifications, and digressions that do not improve the final answer and often worsen it by introducing errors. Forcing large models to respond concisely improves their accuracy by 26.3 percentage points, reducing the gap with small models by 67%, while small models, subjected to the same constraint, suffer a drop of only 3.1 percentage points.

The most surprising result concerns specific benchmarks. On GSM8K, the mathematical reasoning dataset, and on MMLU-STEM, the scientific knowledge one, brevity constraints completely reverse the performance hierarchy: large models, which in normal conditions performed worse than small ones, end up surpassing them by 7.7-15.9 percentage points. In other words, the superior capability was already there but was masked by the tendency to "think out loud" excessively. Brevity does not compress reasoning; it frees it from the verbose shell that suffocates it.

However, a note of caution is in order, one the study itself honestly raises: on BoolQ, the dataset requiring integration of information distributed across multiple sentences, brevity constraints slightly increase the gap instead of reducing it, from 23.5% to 24.3%. On this type of problem, extended processing is functional, not excessive: cutting it short worsens the result. The effect is not universal but context-dependent, and this nuance is crucial to avoid misreading what the paper demonstrates.

The hypothesis the paper proposes to explain the tendency toward verbosity in large models is that the alignment process via reinforcement learning from human feedback (RLHF) inadvertently trained these models to over-process, as human evaluators tend to reward long and apparently complete answers, creating a systematic bias that scales with model size. It is a plausible explanation consistent with other known phenomena in model training, although it remains an interpretative hypothesis to be further verified.

The Mammoth in the Room

Data in hand, Caveman works, at least in certain contexts. The declared savings are real and measurable, the scientific basis exists and is not trivial, and the technical mechanism is transparent and verifiable by anyone. But an honest analysis requires putting the reservations on the table too, and there are several.

The first reservation concerns the quality of responses on complex tasks. Is a shorter answer always a better answer? Hakim's paper explicitly says no for certain types of problems, and the intuition is verifiable in daily practice. When working on an obscure bug, on an architectural problem with many variables, or on poorly documented legacy code, a response that explains the reasoning step by step has a value that goes beyond the amount of information strictly necessary: it helps to understand, to verify, to build a mental model. Cutting that explanation in the name of efficiency can force more iterations, more clarification questions, and more lost time, nullifying the initial saving.

This leads to the second reservation, regarding the differentiated user experience. For a senior developer who uses an agent as an accelerator, who knows the code they work on and needs quick confirmation rather than explanations, Caveman is probably a net gain. For a junior who also uses the assistant to learn, for someone facing a new technical domain, or for those using agents in contexts that go beyond pure coding—such as technical writing, security analysis, or support for architectural decisions—excessive synthesis can increase the cognitive load and generate more confusion than clarity.

Finally, it is worth remembering that the available scientific evidence supports the principle of brevity as a useful constraint, but does not specifically validate Caveman as an implementation. Hakim's paper is an arXiv preprint at the time of writing and has not yet undergone formal peer review. Brussee's tool attracted the attention of the tech community with 33.5k stars on GitHub in a few days, and this should not be underestimated, but it has not been subject to an independent and systematic evaluation by third parties. These are signs of genuine interest, not certified validation.

Keystone or Meteor?

The most interesting question Caveman poses is not technical; it is methodological. If the most powerful language models structurally tend to respond more verbosely, and if that verbosity is often harmful both for costs and, surprisingly, for the quality of responses on certain types of problems, then the problem is not Caveman's: it belongs to the way these models are trained and evaluated.

Hakim's paper proposes an approach called problem-aware routing: using small models for tasks where they naturally excel or where brevity constraints do not help large models, and using large models with brevity constraints for tasks where they possess superior latent capabilities but are prone to over-processing. This approach, the paper argues, can improve accuracy and reduce costs at the same time. It's not science fiction: it's a form of prompt engineering aware of model scale, an idea that could become common practice in professional agentic workflows.
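In code, the routing idea might look something like the sketch below. It is not taken from the paper: the task categories, the "small"/"large" labels, and the wording of the brevity instruction are all placeholders chosen for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Route:
    model: str   # "small" or "large": placeholder labels, not real model names
    brief: bool  # whether to prepend a brevity constraint to the prompt

# Hypothetical mapping from task type to route.
ROUTES = {
    # Large model has latent capability but tends to over-process: constrain it.
    "math_reasoning": Route(model="large", brief=True),
    # Brevity does not help the large model here: use the small one as is.
    "multi_sentence_reading": Route(model="small", brief=False),
}
DEFAULT = Route(model="large", brief=False)

def route(task_type: str) -> Route:
    """Pick a route for the task type, falling back to the default."""
    return ROUTES.get(task_type, DEFAULT)

def build_prompt(question: str, r: Route) -> str:
    """Prepend an (assumed) brevity instruction when the route asks for it."""
    prefix = "Answer concisely. " if r.brief else ""
    return prefix + question

r = route("math_reasoning")
print(r.model, "|", build_prompt("What is 17 * 24?", r))
```

The hard part in practice is the classification step, which this sketch takes for granted: something has to decide which category a request belongs to before the table can be consulted.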

In this sense, Caveman is not simply a trick to save a few cents on the API. It is a practical demonstration of a principle that research is beginning to formalize: that system instructions are not decorative, that the way a model is queried changes not only the form but the quality of the response, and that optimizing the human-machine interface has measurable consequences on the performance of the overall system.

Legitimate questions remain open. Does the pattern really transfer to all types of tasks or only to structured coding ones? How is the net ROI measured when the calculation includes the cost of additional iterations that an overly terse response can generate? How much does the user's familiarity with the technical domain affect whether brevity is an advantage or a disadvantage? And, returning to the big underlying question: if brevity improves performance, why aren't models natively trained to respond more concisely?

The last question is perhaps the most important, and the answer implicit in Hakim's paper is uncomfortable: because the human feedback on which the alignment of these models is based often prefers length over precision, courtesy over information density. It is we, as evaluators, as users, as clients, who have trained the models for verbosity. Caveman is, after all, a handcrafted corrective to a systemic problem that arises much further upstream.

Fred Flintstone, probably, had already understood everything. Small cave, essential tools, big result. The rest is just extra stone to drag along.