MiniMax M2.7: The AI that learns from itself (almost)

One month. That is all that separates M2.5 from M2.7, the new version of the MiniMax model released on March 18, 2026. In a sector where development cycles were once measured in years and then in months, a few weeks have become the new normal. But this time, the short interval is the least interesting detail.

Just a month ago, in Codemotion magazine, I analyzed MiniMax M2.5: a model with a mixture-of-experts architecture of 230 billion total parameters, of which only 10 billion are active for each token, with performance on SWE-Bench Verified (80.2%) practically identical to Claude Opus 4.6 (80.8%) at one-twentieth of the price. The proposal was as simple as it was unsettling: frontier quality at affordable cost, with an "architect mentality" that emerged spontaneously during training on more than 200,000 real environments. MiniMax, a Shanghai startup listed in Hong Kong with a post-IPO market capitalization of around 13 billion dollars, had already redrawn the quality-price ratio in AI for code.

M2.7 is not a response to a weakness in M2.5. It is something else: a declared attempt to change the way a model is developed, not just what it produces. The keyword MiniMax has chosen to communicate this is self-evolution. It is a fascinating, evocative, and potentially misleading word. It is worth understanding exactly what it means, and above all what it does not mean.

The model that helps build the next model

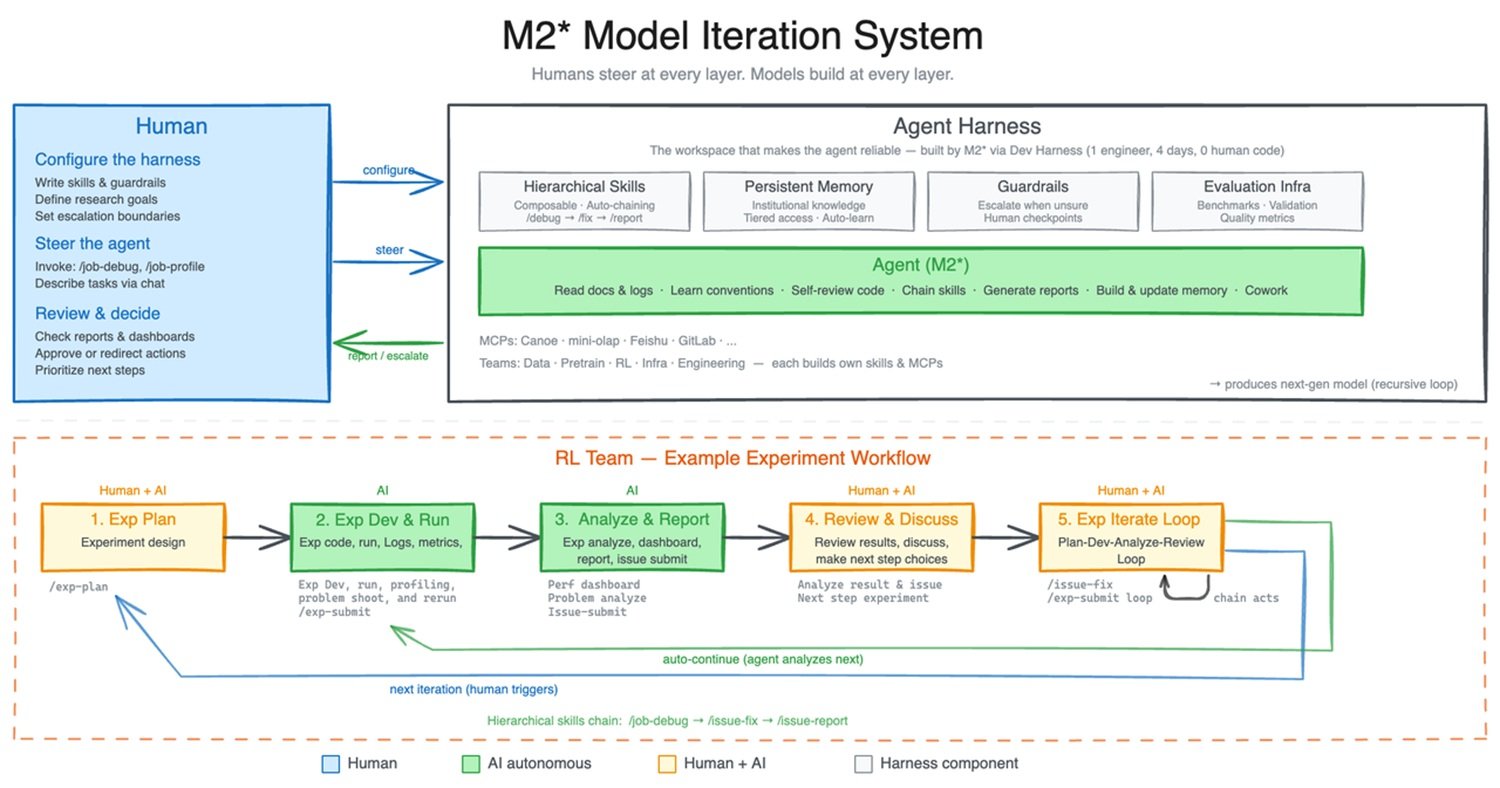

To understand what really happened with M2.7, we need to start from a concrete image. In the MiniMax research team, researchers working on reinforcement learning (training through rewards and penalties) manage long and complex experimental cycles: ideas to test, data to prepare, experiments to launch, results to analyze, code to fix, new experiments to configure. It is work that normally requires collaboration between several people from different teams.

According to official MiniMax documentation, an internal version of M2.7 was put to work on exactly this cycle: the model helped with literature reviews, prepared data pipelines, launched experiments, monitored logs, debugged errors, analyzed metrics, opened merge requests, and ran smoke tests. Human researchers intervened only for critical decisions and strategic discussions. In this setting, MiniMax estimates that M2.7 autonomously handled 30-50% of the RL team's workflow.

But there is a second, more radical level. MiniMax asked M2.7 to autonomously optimize a model's performance on an internal programming scaffold. The process was entirely automated: the system ran an iterative cycle of analyzing failure trajectories, planning changes, modifying the scaffold code, running evaluations, comparing results, and deciding whether to keep or revert each change. This cycle repeated for more than 100 consecutive iterations, and MiniMax reports a 30% performance improvement on its internal evaluation sets. The optimizations the model found on its own included a systematic search for the best combination of sampling parameters such as temperature, frequency penalty, and presence penalty; more specific workflow guidelines (for example, automatically searching other files for similar bug patterns after a fix); and loop-detection mechanisms within the agentic cycle.
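The last item is easy to underestimate. MiniMax does not describe how its loop detection works, so the following is only a minimal sketch of one common approach: keep the agent's most recent actions in a sliding window and flag the run when the same action keeps recurring. The class name, the window size, the repeat threshold, and the example actions are all illustrative assumptions.

```python
from collections import deque


class LoopDetector:
    """Flags an agent that keeps repeating the same action within a short window.

    Hypothetical sketch: an "action" is any hashable value,
    e.g. a (tool_name, argument) tuple.
    """

    def __init__(self, window: int = 6, max_repeats: int = 3) -> None:
        self.recent = deque(maxlen=window)  # oldest actions fall out automatically
        self.max_repeats = max_repeats

    def observe(self, action) -> bool:
        """Record an action; return True if it now looks like a loop."""
        self.recent.append(action)
        return self.recent.count(action) >= self.max_repeats


detector = LoopDetector()
steps = [
    ("run_tests", "tests/test_api.py"),
    ("edit_file", "api.py"),
    ("run_tests", "tests/test_api.py"),
    ("edit_file", "api.py"),
    ("run_tests", "tests/test_api.py"),
]
for step in steps:
    if detector.observe(step):
        print("loop detected at", step)
        break
```

A real agent would react to the flag, for instance by forcing a different strategy or escalating to a human, rather than simply stopping.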

This is the technical core of M2.7's self-evolution. It is not a model that rewrites itself from scratch, it is not artificial consciousness, it is not an entity that autonomously decides where to improve. It is an agent that optimizes a predefined objective function, in a controlled environment, on a specific problem, with rules established by human engineers. The difference from the past is the speed and scale of this optimization: more than a hundred cycles in a few hours, where a human team would have needed weeks.
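To make "optimizing a predefined objective function" concrete, here is a deliberately simplified keep-or-revert loop, a sketch rather than MiniMax's pipeline: a fixed scoring function stands in for the evaluation set, each iteration perturbs one sampling parameter, and the change is kept only if the score improves. The `evaluate` function and its target values are invented for illustration; in a real setup each evaluation would mean running the scaffold on a benchmark.

```python
import random


def evaluate(params: dict) -> float:
    """Stand-in for an evaluation set: a made-up score that peaks at
    temperature=0.7, frequency_penalty=0.2, presence_penalty=0.0."""
    target = {"temperature": 0.7, "frequency_penalty": 0.2, "presence_penalty": 0.0}
    return -sum((params[k] - target[k]) ** 2 for k in target)


def propose_change(params: dict, rng: random.Random) -> dict:
    """Perturb one randomly chosen parameter (a crude 'plan a change')."""
    candidate = dict(params)
    key = rng.choice(sorted(candidate))
    candidate[key] = round(candidate[key] + rng.uniform(-0.1, 0.1), 3)
    return candidate


def optimize(params: dict, iterations: int = 100, seed: int = 0) -> tuple[dict, float]:
    rng = random.Random(seed)
    best_score = evaluate(params)
    for _ in range(iterations):
        candidate = propose_change(params, rng)
        score = evaluate(candidate)
        if score > best_score:  # keep the change
            params, best_score = candidate, score
        # otherwise the candidate is discarded, i.e. the change is reverted
    return params, best_score


start = {"temperature": 1.0, "frequency_penalty": 0.0, "presence_penalty": 0.5}
best, score = optimize(start)
print(best, score)
```

The design choice worth noticing is that the loop never decides what "better" means: `evaluate` is fixed by whoever wrote it, which is exactly the boundary described above.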

It is also worth taking a step back and noting that MiniMax is not the first organization to explore this territory. In May 2025, Google DeepMind presented AlphaEvolve, a Gemini-powered evolutionary coding agent that runs an automated cycle of generating, evaluating, and selecting algorithms. AlphaEvolve improved the efficiency of Google's data centers (recovering on average 0.7% of Google's worldwide compute resources), sped up a critical kernel in Gemini's architecture by 23%, and found a way to multiply 4×4 complex-valued matrices with 48 scalar multiplications, improving on Strassen's 1969 algorithm, which needs 49. The approach was, however, fundamentally different: AlphaEvolve focused on optimizing specific algorithms that can be evaluated with objective metrics. M2.7 attempts something broader, applying the logic of self-improvement to an entire ML research cycle, with tools, persistent memory, and collaboration between agents. The two families of approaches are related, not identical.
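For scale, the matrix result can be expressed as the number of scalar multiplications needed to multiply two 4×4 matrices:

```latex
\begin{aligned}
\text{schoolbook method:}\quad & 4^3 = 64 \\
\text{Strassen (1969), two levels of recursion:}\quad & 7 \times 7 = 49 \\
\text{AlphaEvolve (2025), complex-valued entries:}\quad & 48
\end{aligned}
```

Strassen's method multiplies 2×2 block matrices with 7 products instead of 8, so applying it to a 4×4 matrix split into 2×2 blocks of 2×2 matrices gives 7 × 7 = 49.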

Self-evolution: How much is really true?

The public narrative around M2.7 quickly gravitated toward the "AI that evolves on its own" frame, with videos racking up millions of views and posts painting science-fiction scenarios. It is time to bring the discussion back down to earth.

The figure MiniMax cites, 30-50% of the workflow handled autonomously within the RL team, refers to a specific, controlled context: an internal team with predefined tools, clear objectives, and purpose-built infrastructure. It is not a number that transfers automatically to other contexts. The remaining 50-70% of the work stayed in the hands of human researchers, who made the strategic decisions, evaluated the direction of experiments, and above all defined what "improvement" meant.

A critical point that MiniMax's communication leaves in the shadows is the control architecture. What actions can the agent execute autonomously? Can it modify production code without human approval? Can it launch experiments with arbitrary computational costs? How are harmful loops, silent regressions, or unwanted emergent behaviors prevented? The official release mentions that the agent "decides to keep or cancel changes" based on the results of evaluation sets, but it does not describe the guardrails that keep the system from converging toward false optima or optimizing proxies that do not match the real objectives.
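As an illustration of what such guardrails could look like, and explicitly not a description of anything MiniMax has disclosed, a minimal acceptance gate might require a candidate change to improve the score the agent optimizes, not regress on a held-out set the agent never sees, and stay within a compute budget. The field names, the tolerance, and the budget below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    optimization_score: float  # score on the set the agent optimizes against
    holdout_score: float       # score on a set the agent never sees
    compute_cost: float        # e.g. GPU-hours spent producing this result


def accept_change(baseline: EvalResult, candidate: EvalResult,
                  max_holdout_drop: float = 0.005,
                  compute_budget: float = 10.0) -> bool:
    """Hypothetical acceptance gate for a self-optimizing agent."""
    improves_target = candidate.optimization_score > baseline.optimization_score
    # A gain on the optimized set paired with a loss on the held-out set
    # is a typical sign of optimizing a proxy rather than the real objective.
    no_holdout_regression = (baseline.holdout_score - candidate.holdout_score) <= max_holdout_drop
    within_budget = candidate.compute_cost <= compute_budget
    return improves_target and no_holdout_regression and within_budget


baseline = EvalResult(0.62, 0.60, 2.0)
overfit = EvalResult(0.70, 0.55, 3.0)  # better on target, worse on held-out set
genuine = EvalResult(0.66, 0.61, 3.0)  # better on both
print(accept_change(baseline, overfit), accept_change(baseline, genuine))
```

A held-out check of this kind does not eliminate proxy optimization, but it makes the most obvious form of it visible.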

The absence of an arXiv-style technical report, with details on architecture, model size, data mix, and training strategies, is an objective limitation for anyone who wants to carry out an independent analysis. MiniMax has published technical documentation and the repository of the agentic project OpenRoom, but the details of the self-evolution pipeline remain proprietary. In a sector where verifiability is the foundation of scientific trust, this opacity is a choice with precise consequences: it makes it impossible to distinguish, from the outside, verifiable claims from those that remain in the domain of marketing.

Benchmarks: What the numbers say (and what they don't say)

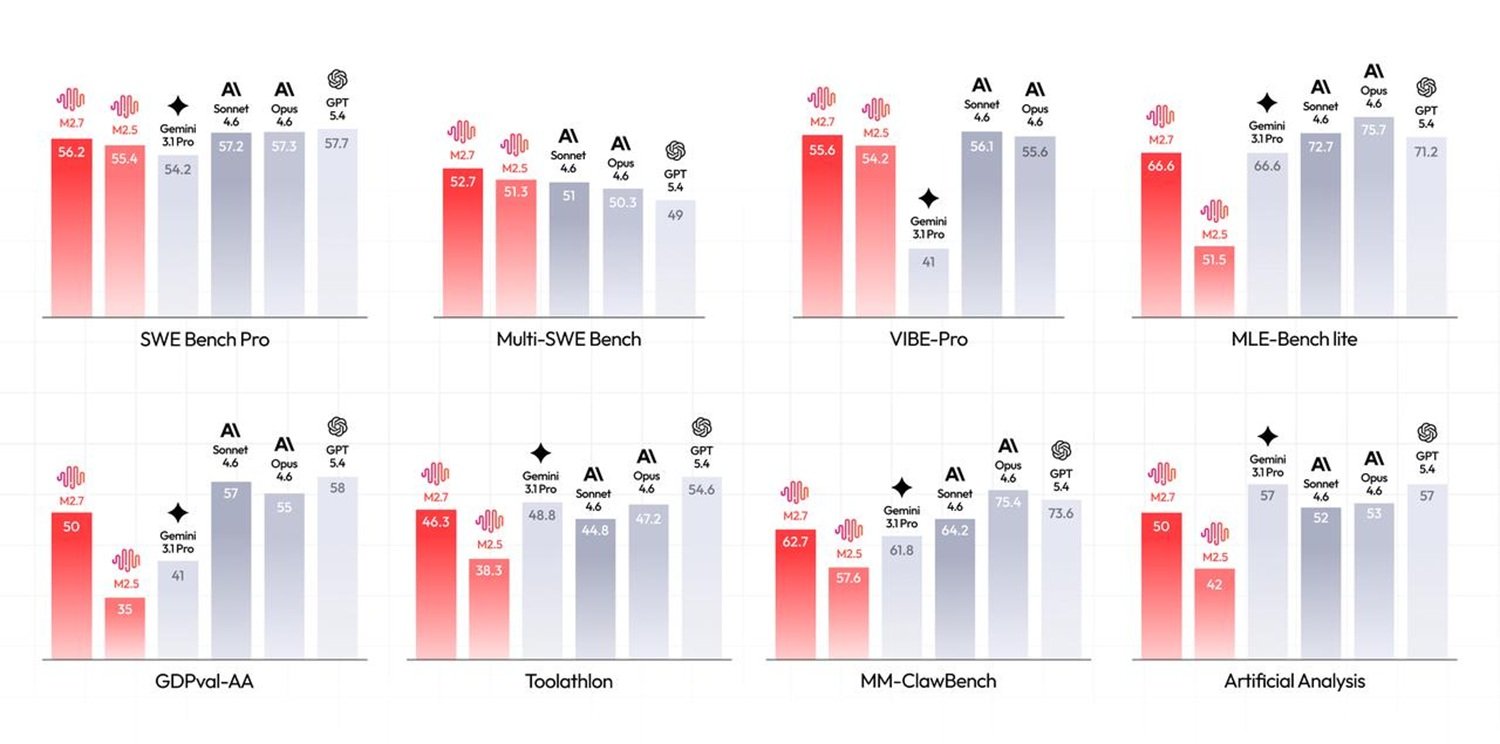

The benchmarks declared by MiniMax deserve a careful reading. On SWE-Pro, which evaluates software engineering capabilities on real multi-language problems, M2.7 reaches 56.22%, close to Opus 4.6's level. On VIBE-Pro, a benchmark for delivering complete end-to-end projects (web, Android, iOS, simulations), the score is 55.6%. On Terminal Bench 2, which measures deep understanding of complex engineering systems, M2.7 reaches 57.0%. On GDPval-AA, which evaluates the ability to deliver professional tasks in domains such as finance, law, and office work, M2.7 obtains an Elo rating of 1495, the highest among open-source models and behind only Opus 4.6, Sonnet 4.6, and GPT-5.4 among all models tested.
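An Elo rating is only meaningful relative to other ratings. Assuming GDPval-AA follows the standard Elo logistic model with a 400-point scale (an assumption; the exact methodology is the benchmark's own), the expected head-to-head score between two models depends only on their rating gap. The 1395 opponent below is hypothetical.

```python
def elo_expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score of A against B under the standard Elo logistic model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


print(round(elo_expected_score(1495, 1495), 3))  # equal ratings
print(round(elo_expected_score(1495, 1395), 3))  # 100-point advantage
```

Under this model a 100-point gap translates into an expected score of about 0.64, so small Elo differences between leading models correspond to modest differences in head-to-head preference.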

Independent data become more interesting with the report from Kilo, an agentic coding platform that tested M2.7 on two of its own benchmarks. On PinchBench, the benchmark for standard OpenClaw agent tasks, M2.7 scores 86.2%, placing 5th out of 50 models tested, just 1.2 points behind Claude Opus 4.6 (87.4%). The jump from M2.5 (82.5%) is 3.7 points, enough to move it from the middle tier to the upper tier of the leaderboard. On Kilo Bench, 89 autonomous coding tasks spanning the entire spectrum, from git operations to differential cryptanalysis and from MIPS emulation to QEMU automation, M2.7 passed 47% of tasks, second only to Qwen3.5-plus (49%).
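Percentages on small benchmarks can hide how few tasks separate two models. The sketch below converts the Kilo Bench pass rates into approximate task counts and a simple binomial standard error, assuming one attempt per task and independent, equally weighted tasks, which is a simplification of whatever protocol Kilo actually uses.

```python
import math


def pass_rate_summary(rate: float, n_tasks: int) -> tuple[float, float]:
    """Approximate number of tasks passed and the binomial standard error of the rate."""
    passed = rate * n_tasks
    std_err = math.sqrt(rate * (1 - rate) / n_tasks)
    return passed, std_err


for model, rate in [("M2.7", 0.47), ("Qwen3.5-plus", 0.49)]:
    passed, se = pass_rate_summary(rate, 89)
    print(f"{model}: ~{passed:.0f}/89 tasks, standard error ~{se * 100:.1f} points")
```

Under these assumptions, the two-point gap amounts to about two tasks, well within a standard error of roughly five points, so the ordering between the two models is better read as a near tie.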

The most interesting finding from Kilo Bench, however, is not the raw pass rate but the behavioral profile. M2.7 tends to read surrounding code extensively before writing: it analyzes dependencies, traces call chains, and gathers context from related files. This "investigator" approach pays off on tasks that require deep systemic understanding; Kilo cites as an example a SPARQL task where the model correctly identified that a filter on EU countries was an eligibility criterion, not an output filter, a subtle reasoning distinction that other tested models missed. But the same tendency toward over-exploration causes timeouts on tasks where speed matters more than depth: M2.7's median task duration is 355 seconds, longer than that of its predecessors. Token consumption rises accordingly: about 2.8 million input tokens per trial on Kilo Bench, the highest among the models tested.

Voices from practical experience add another dimension. In the comments on the Kilo report, one user describes very disappointing experiences with M2.7 on migration tasks: the model tended to create fictional UI components instead of migrating existing elements, inserted TODO comments and then ignored them entirely, and refused to continue migrations midway through. The same user reports much better results with GPT-5.3-Codex, Claude 4.6, and GLM-5 on the same tasks. It is not a statistically representative sample, but it signals that M2.7's behavioral profile (strong on systemic understanding and contextual reasoning, potentially fragile on the disciplined execution of structured plans) does not fit all development scenarios equally well.

Developers and Knowledge Workers: Who gains

The most concrete operational promise of M2.7 lies in production diagnostics. The official release describes debugging scenarios in live environments where the model correlated monitoring metrics with deployment timelines, ran statistical analysis on sampled traces, connected to databases on its own to verify hypotheses, identified missing index migration files in the repository, and proposed non-blocking fixes. MiniMax claims that, on several occasions, this brought production incident recovery time below three minutes, compared with traditional manual processes.
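One of the correlation steps described above, lining up the onset of an anomaly with recent deployments, can be sketched in a few lines. This is a toy under stated assumptions, not MiniMax's tooling: the metric samples, the 500 ms threshold, the 30-minute lookback window, and the service names are all invented.

```python
from datetime import datetime, timedelta


def first_anomaly(samples, threshold):
    """Return the timestamp of the first metric sample above the threshold."""
    for ts, value in samples:
        if value > threshold:
            return ts
    return None


def suspect_deploys(deploys, anomaly_start, lookback=timedelta(minutes=30)):
    """Deploys that landed shortly before the anomaly, most recent first."""
    window_start = anomaly_start - lookback
    hits = [(ts, name) for ts, name in deploys if window_start <= ts <= anomaly_start]
    return sorted(hits, reverse=True)


t0 = datetime(2026, 3, 18, 12, 0)
# p99 latency in milliseconds, sampled every five minutes (invented data)
latency_p99_ms = [(t0 + timedelta(minutes=m), v)
                  for m, v in [(0, 180), (5, 185), (10, 190), (15, 950), (20, 1100)]]
deploys = [(t0 - timedelta(hours=3), "auth-service v41"),
           (t0 + timedelta(minutes=12), "orders-service v88")]

start = first_anomaly(latency_p99_ms, threshold=500)
print(start, suspect_deploys(deploys, start))
```

A temporal match is only a hypothesis; confirming it still requires the kind of verification (queries, traces, code inspection) that the release attributes to the agent.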

It is a powerful claim, and not implausible for anyone familiar with diagnosing distributed systems: much of the time in a production incident is spent correlating information that already exists across different dashboards, logs, and repositories. An agent that can navigate these spaces autonomously and build coherent causal hypotheses is genuinely useful. The flip side is automation bias: the tendency to over-rely on agent-generated diagnoses even when they are wrong, especially under pressure, when there is little time to verify. A system that finds the right cause 90% of the time and fails silently the other 10% is more dangerous than one that fails visibly.

For knowledge workers in finance and other professional domains, M2.7 introduces capabilities that deserve critical attention. The demonstration case shown by MiniMax concerns an analysis of TSMC: starting from annual reports and earnings call transcripts, the model autonomously builds a revenue forecasting model, sets out its assumptions, produces a PowerPoint presentation from a template, and drafts an equity research report in Word. MiniMax reports that industry professionals rated the output usable as a first draft that could feed directly into subsequent workflows.
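At its simplest, a forecasting model of the kind described is a base figure compounded forward under explicit, reviewable assumptions. The sketch below shows only that skeleton; the base value and growth rates are placeholders, not TSMC figures, and a real equity model would add segment-level drivers, margins, and scenarios.

```python
def project_revenue(base: float, growth_rates: list[float]) -> list[float]:
    """Compound a base-year revenue forward, one growth rate per future year."""
    projections = []
    revenue = base
    for g in growth_rates:
        revenue *= 1 + g
        projections.append(round(revenue, 2))
    return projections


# Placeholder inputs, NOT TSMC figures: a base revenue of 100 (any currency unit)
# and hypothetical growth assumptions of 20%, 15%, and 10%.
print(project_revenue(100.0, [0.20, 0.15, 0.10]))
```

Keeping the assumptions as explicit inputs is what makes such a draft reviewable: a human analyst can challenge each growth rate rather than the output as a whole.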

This type of application, an agent that produces financial analysis almost autonomously, raises non-trivial regulatory questions. Under MiFID II, investment advice may only be provided by authorized firms. An analysis produced by an AI agent and presented to a client without adequate supervision and disclosure could constitute a regulatory violation. Who is responsible for errors in an autonomously generated financial model? The correct answer is still "the human who decided to use it and present it," but the chain of responsibility grows thinner as the process becomes more automated.

Price remains the strongest argument

Beyond all the talk about self-evolution, the factor that most concretely positions M2.7 in the market is cost. API access costs $0.30 per million input tokens and $1.20 per million output tokens for the standard version, with an M2.7-highspeed version that offers higher speed at the same price thanks to automatic caching. Claude Opus 4.6 costs $15 per million input tokens and $75 per million output tokens. The ratio is therefore 1:50 on input and 1:62.5 on output. For agentic applications that consume tens of millions of tokens per cycle, this is the difference between a feasible experiment and an economically prohibitive one.
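The arithmetic is easy to reproduce. The sketch below uses the list prices quoted above and, as an example workload, the roughly 2.8 million input tokens per trial that Kilo measured for M2.7; the 200,000 output tokens are an assumption, and caching discounts are ignored.

```python
# USD per million tokens, as quoted in the text
PRICES_PER_MILLION = {
    "MiniMax M2.7": {"input": 0.30, "output": 1.20},
    "Claude Opus 4.6": {"input": 15.00, "output": 75.00},
}


def run_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one run at list prices, without caching discounts."""
    p = PRICES_PER_MILLION[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# 2.8M input tokens matches the Kilo Bench per-trial figure;
# 200k output tokens is an assumed value for illustration.
for model in PRICES_PER_MILLION:
    print(f"{model}: ${run_cost(model, 2_800_000, 200_000):.2f}")
```

With these inputs the same run costs about $1.08 on M2.7 and $57.00 on Opus 4.6. Because M2.7 consumed more tokens than other models on Kilo Bench, a fair comparison should use each model's own token counts rather than a single shared workload.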

Kilo synthesizes the practical positioning well: M2.7 is a strong choice when working on tasks that reward deep context gathering, complex refactoring, changes affecting the entire codebase, systemic analysis. For time-sensitive and well-circumscribed tasks, faster and less token-intensive models can give better results at lower costs. It is not a universal substitute; it is a tool with a precise profile.

OpenRoom and the new agent interface

Among the novelties accompanying M2.7 is OpenRoom, an agent-based interaction system that MiniMax has released as open source. OpenRoom moves interaction beyond a pure text stream into an interactive web GUI space: characters are not static prompts but entities with persistent settings that actively interact with the environment, generating real-time visual feedback. According to the repository's developer notes, most of the code was written by AI.
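The difference between a static prompt and a character with persistent settings is essentially one of state that survives between sessions. The sketch below illustrates the idea with a dataclass saved to JSON; it is a generic, hypothetical example and does not reflect OpenRoom's actual code or data format.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class Character:
    """Hypothetical character whose settings and memory persist across sessions."""
    name: str
    persona: str
    memory: list[str] = field(default_factory=list)

    def remember(self, event: str) -> None:
        # Append an interaction event to the character's long-lived memory.
        self.memory.append(event)

    def save(self, path: Path) -> None:
        # Serialize the full state, not just a prompt string.
        path.write_text(json.dumps(asdict(self)))

    @classmethod
    def load(cls, path: Path) -> "Character":
        # Rebuild the character exactly as it was when saved.
        return cls(**json.loads(path.read_text()))


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "guide.json"
    guide = Character("Guide", "patient museum guide")
    guide.remember("visitor asked about the east wing")
    guide.save(path)
    restored = Character.load(path)  # e.g. in a later session
    print(restored.memory)
```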

The direction is clear: MiniMax imagines agents that inhabit spaces, not just respond to prompts. It is a vision with interesting implications for gaming, the creator economy, and, on a more delicate level, for the psychological dynamics of prolonged interaction with artificial entities that have persistent personalities. The risk of anthropomorphization and emotional attachment toward these systems has been documented in the academic literature at least since the work of Clifford Nass and Byron Reeves in the 1990s on the "media equation." It is not science fiction; it is social psychology applied to interactive systems.

The unresolved issue: Opacity, governance and cultural narrative

There is a structural tension in the way M2.7 was presented to the world, a tension that is worth naming explicitly. MiniMax has built a powerful and memorable narrative around the "AI that evolves on its own," but it has provided very few verifiable technical details on how that evolution is governed. There is no public technical report. There is no disclosure on the security mechanisms of the self-optimizing agent. There is no explanation of how optimization loops that converge toward wrong proxies or unwanted emergent behaviors are prevented.

This is not necessarily bad faith: it is the norm in the sector. OpenAI, Anthropic, and Google do not publish exhaustive technical reports on all their internal systems. But when a company explicitly claims that a model "participates in its own evolution" and autonomously manages ML research cycles, the community should expect a proportionally higher level of detail. The difference between a controlled optimization cycle and a system that escapes the objectives defined by its creators is exactly the kind of detail a technical report should clarify.

On the cultural narrative front, it is worth noting how the tech press has amplified the self-evolution angle far beyond what the data justify. The frame of the "AI that improves on its own" is narratively powerful because it evokes deep archetypes, from the Golem of Jewish tradition to Frankenstein's creature and Kubrick's HAL 9000, but accurate descriptions of the phenomenon are far more prosaic: a well-designed agent that optimizes a function on a specific task in a controlled environment. The difference between the two descriptions is the difference between literature and engineering. Both are useful, but they are not the same thing.

The regulatory issue remains in the background but does not vanish. The European AI Act, in its current framework, classifies high-risk AI systems based on their application domain and their potential impact. An agentic model that autonomously participates in training pipelines of other AI models, or that produces financial analysis with reduced human supervision, approaches categories that the AI Act tends to look at with particular attention. How M2.7 fits into this framework is a question to which no one has yet given a precise answer, neither MiniMax nor the European regulators.

M2.7 is a genuinely capable model, with independent benchmarks confirming its position in the leading group of the current landscape, a price that makes it accessible to projects for which Western frontier models would be prohibitive, and an approach to self-optimization that is technically real, even if less spectacular than the public narrative suggests. The 30-50% autonomy in the internal RL workflow is a solid engineering result. It is not the birth of conscious AI. It is something more modest and more interesting: a signal that the development cycle of the models themselves is starting to include the models as active participants, with all that this entails in terms of speed, scale, and the need for new governance tools.

The most relevant question that M2.7 leaves open is not "how far can self-evolution go?", but "who decides what it means to improve, and with what transparency?". As long as that question remains without a public and verifiable answer, every claim about the AI that learns from itself should be read with curiosity, rigor, and a healthy dose of methodological skepticism.