100 Million Tokens: Memory or Mirage?

Let's try to reason through images. One hundred million tokens is not a number that the human brain can spontaneously visualize, so we need an anchor. A token, in the language of language models, corresponds approximately to three-quarters of an English word. Ten thousand tokens are roughly seventy-five pages of text. One million tokens represent the entire *Recherche* by Proust, that seven-volume work that almost everyone pretends to have read. Ten million tokens cover the entire works of Shakespeare plus the Bible plus a few medium-sized encyclopedias. One hundred million tokens are something on the order of a small university library, say sixty thousand average-length novels loaded into a single active context, simultaneously available for a language model to answer questions.

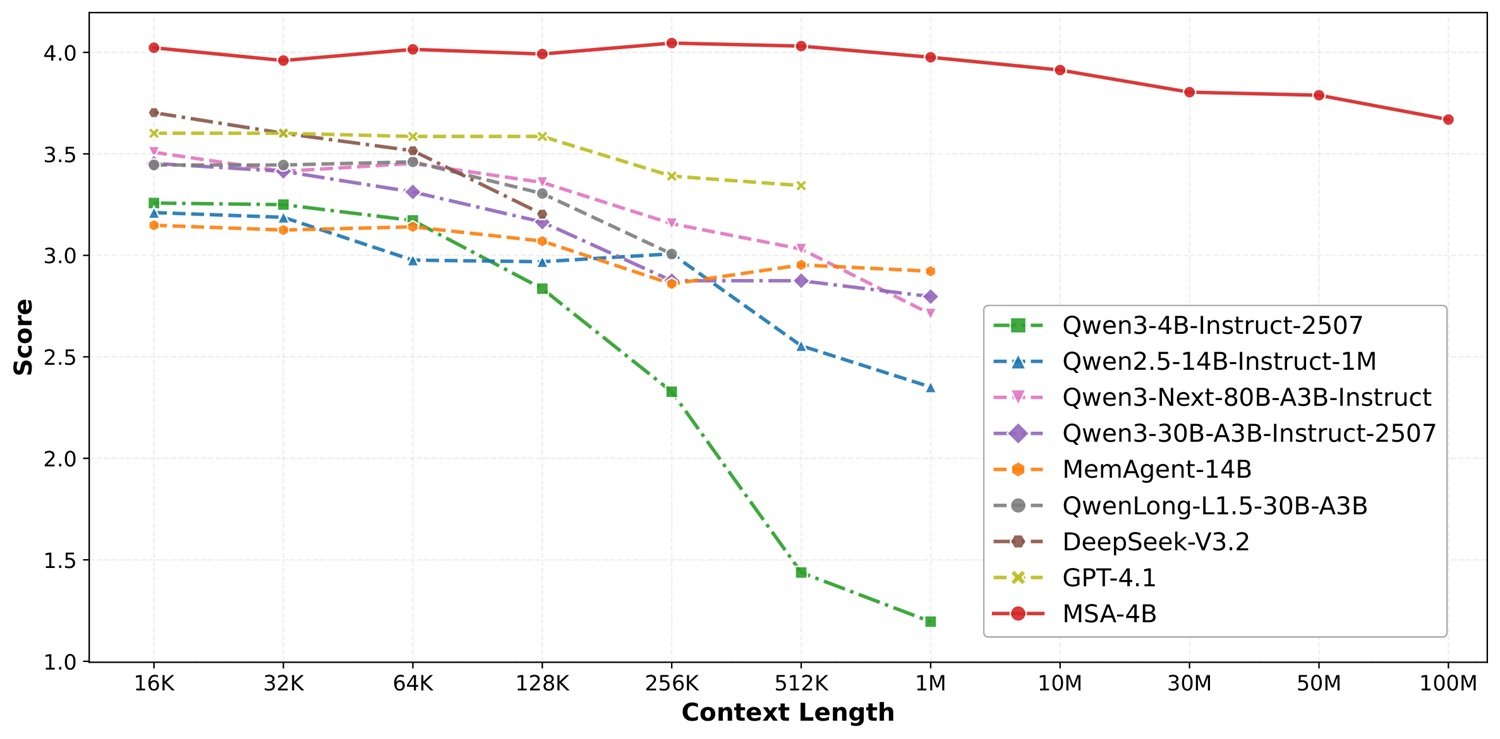

This is the central claim of the Memory Sparse Attention (MSA) project, published by the EverMind team in March 2026 on Zenodo and accompanied by a GitHub repository that currently counts over 3000 stars. The statement is clear: an architecture capable of scaling contextual memory up to 100 million tokens while maintaining less than 9% performance degradation compared to the starting point. For those working with language models in 2026, where the practical boundary of full attention still stands between 128,000 and one million tokens before things start to deteriorate seriously, this is a figure that deserves attention. It also deserves some questions.

The Bottleneck Holding Everything Back

To understand what MSA proposes, we must first understand why the problem exists. Transformers, the architecture on which practically all contemporary large language models rest, have a known structural flaw: full-density attention scales quadratically with respect to context length. In practical terms, this means that doubling the context does not double the computational cost: it quadruples it. At 128,000 tokens, the situation is already challenging; at one million it becomes prohibitive; at one hundred million it is simply impossible with a standard architecture, regardless of the number of GPUs deployed.
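The quadratic claim is easy to check with a few lines of arithmetic. The sketch below counts the query-key score entries of a single dense attention matrix (per head, per layer) and compares each context length with a 128,000-token baseline. The function name is only illustrative, and real costs also depend on kernels, memory traffic, and causal masking, which halves the count without changing the ratios.

```python
def attention_pairs(context_len: int) -> int:
    """Number of query-key score entries in one dense attention matrix."""
    return context_len * context_len


base = 128_000
for n in (128_000, 256_000, 1_000_000, 100_000_000):
    # Ratio of score entries relative to the 128K baseline.
    ratio = attention_pairs(n) / attention_pairs(base)
    print(f"{n:>11,} tokens -> {ratio:,.1f}x the score entries of {base:,}")
```

Doubling from 128,000 to 256,000 tokens gives a factor of four, and the factor keeps growing with the square of the length from there.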

Existing solutions fall roughly into three families, none of them satisfactory. The first is the compression of latent states, as in linear or hybrid architectures (Mamba, Jamba, and the like), which reduce computational complexity but tend to lose information progressively, like a photocopy of a photocopy of a photocopy. The second is external memory via RAG (Retrieval-Augmented Generation): a corpus of documents is kept outside the active context and relevant pieces are retrieved at query time. It works, and it is already in production everywhere, but it introduces a structural discontinuity: retrieval and generation are two separate systems, trained separately and optimized separately, and this separation has a cost in terms of coherence and multi-hop reasoning. The third family is the brute-force extension of the context, which suffers from all the quadratic problems mentioned above.

MSA aims to occupy a fourth, previously little-explored space: a sparse attention mechanism trained end-to-end, which integrates retrieval directly into the Transformer architecture without breaking the differentiable chain. The intuition is brilliant. The execution requires examination.

The Two-Speed Brain

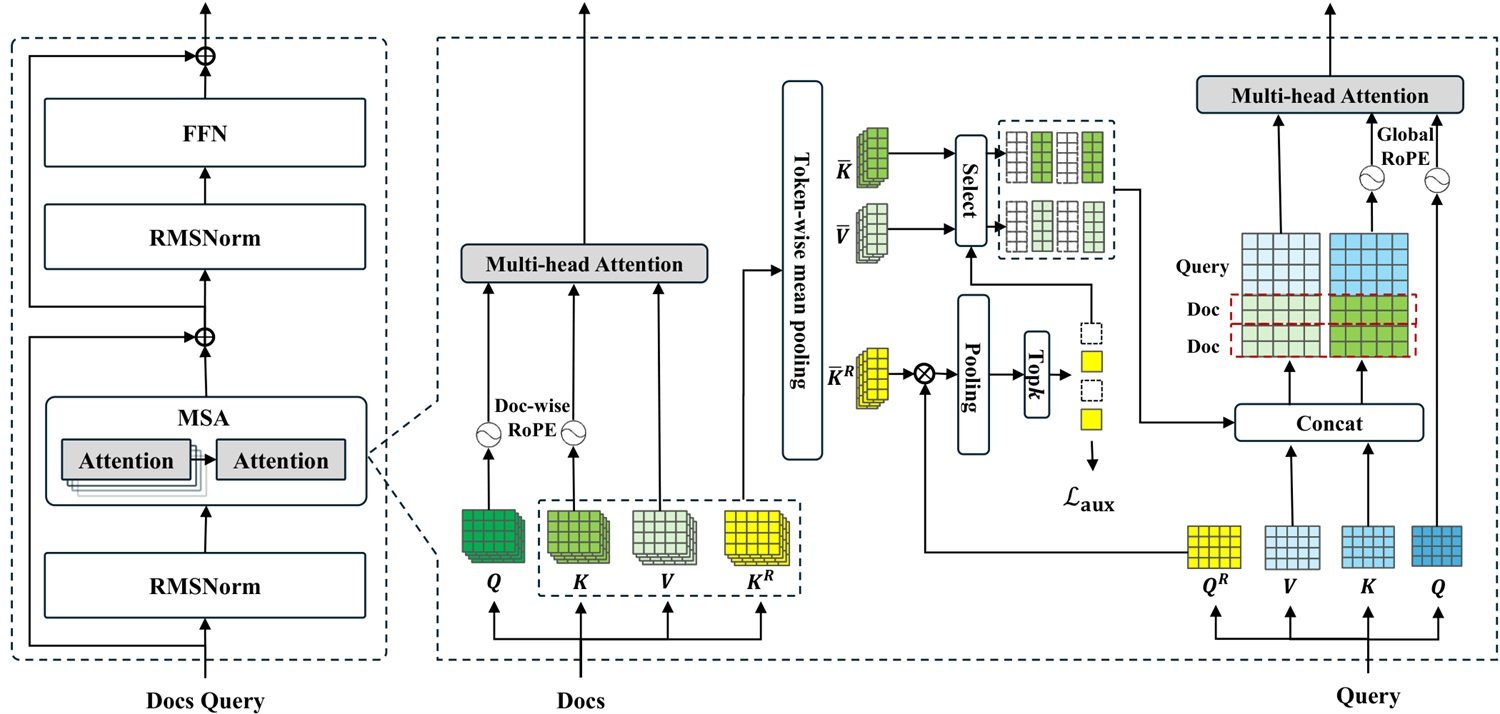

The MSA architecture is inspired, at least metaphorically, by the neuropsychological distinction between working memory and long-term memory. The concept is not entirely new, but the way it is implemented has some original features.

The starting point is the physical separation of memory. When a document is processed by MSA, the model produces three types of compressed representations: routing keys (Kᵣ), content keys (K̄), and content values (V̄). Routing keys are highly compressed, lightweight vectors designed to do one thing: quickly answer the question "is this document relevant to the current query?". They are stored in GPU VRAM, where access latency is on the order of microseconds. The full content, meaning the K̄ keys and V̄ values, is instead moved to CPU system RAM, which is much more abundant and much less expensive, but slower. Only when the router decides a document is relevant is that content transferred from RAM to VRAM to participate in the attention calculation.

This scheme, which the team calls Memory Parallel, allows the routing phase to be distributed across multiple GPUs in parallel: each handles a portion of the routing keys, queries are broadcast, relevance scores are aggregated, and only the top-k selected documents are actually loaded. All of this, the authors claim, on a configuration of just two NVIDIA A800 GPUs.
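The routing flow described above can be sketched in a few lines of plain Python. This is a toy illustration, not EverMind's implementation: the two dictionaries stand in for the VRAM and RAM tiers, the dot-product scoring is an assumption, and every name (`routing_keys`, `content_store`, `shard`, `route`, `fetch`) is hypothetical.

```python
import heapq


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


# "Fast tier": one small routing key per document (stands in for K_r in VRAM).
routing_keys = {
    "doc_a": [0.9, 0.1],
    "doc_b": [0.1, 0.9],
    "doc_c": [0.7, 0.3],
}
# "Slow tier": the bulky payload (stands in for the compressed K/V in CPU RAM).
content_store = {doc_id: f"compressed K/V for {doc_id}" for doc_id in routing_keys}


def shard(items, n_shards):
    """Split routing keys round-robin, mimicking one slice per GPU."""
    shards = [dict() for _ in range(n_shards)]
    for i, (doc_id, key) in enumerate(items.items()):
        shards[i % n_shards][doc_id] = key
    return shards


def route(query, shards, top_k):
    """Score every shard against the broadcast query, then keep the global top-k."""
    scored = []
    for local_keys in shards:
        scored.extend((dot(query, key), doc_id) for doc_id, key in local_keys.items())
    return [doc_id for _, doc_id in heapq.nlargest(top_k, scored)]


def fetch(doc_ids):
    """Only the selected documents leave the slow tier."""
    return [content_store[doc_id] for doc_id in doc_ids]


selected = route([1.0, 0.0], shard(routing_keys, n_shards=2), top_k=2)
payloads = fetch(selected)
print(selected, payloads)
```

The point of the design is visible even at this scale: scoring touches every routing key, but the expensive payloads are read only for the documents that survive the top-k cut.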

The second relevant architectural element is document-wise RoPE, a variant of the rotary positional embeddings (RoPE) used in modern Transformers. The problem with standard RoPE in extremely long contexts is that the model, trained on short sequences, "doesn't know" how to interpret very high positions that appear during inference on long contexts, resulting in performance degradation. The solution proposed by MSA is simple: the position counter resets at the beginning of each document. Every text always starts from position zero, regardless of how many documents precede it in memory. This allows a model trained on 64,000-token sequences to extrapolate up to 100 million without ever seeing out-of-distribution positions.
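A minimal sketch makes the difference between the two position schemes concrete. It only generates position indices for a list of document lengths; the rotary embedding itself is omitted, and both function names are hypothetical.

```python
def global_positions(doc_lengths):
    """Standard scheme: positions keep counting across document boundaries."""
    positions, offset = [], 0
    for n in doc_lengths:
        positions.append(list(range(offset, offset + n)))
        offset += n
    return positions


def document_wise_positions(doc_lengths):
    """Document-wise scheme: the counter restarts at zero for every document."""
    return [list(range(n)) for n in doc_lengths]


lengths = [4, 3, 5]
max_global = max(p[-1] for p in global_positions(lengths))
max_local = max(p[-1] for p in document_wise_positions(lengths))
print(global_positions(lengths))
print(document_wise_positions(lengths))
print(max_global, max_local)
```

With the global scheme the largest position grows with the total size of the memory; with the document-wise scheme it is bounded by the longest single document, however many documents are stored.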

The choice is intelligent, but it has a consequence worth noting: from a positional standpoint, the documents in memory carry no information about their relative order. The model knows what is inside each document but has no positional signal indicating which document is "newer" or "older" in the absolute temporal sequence. For applications where chronological order matters, this is a real limitation that the paper recognizes only implicitly.

Memory Interleave: Reasoning or Intelligent Retrieval?

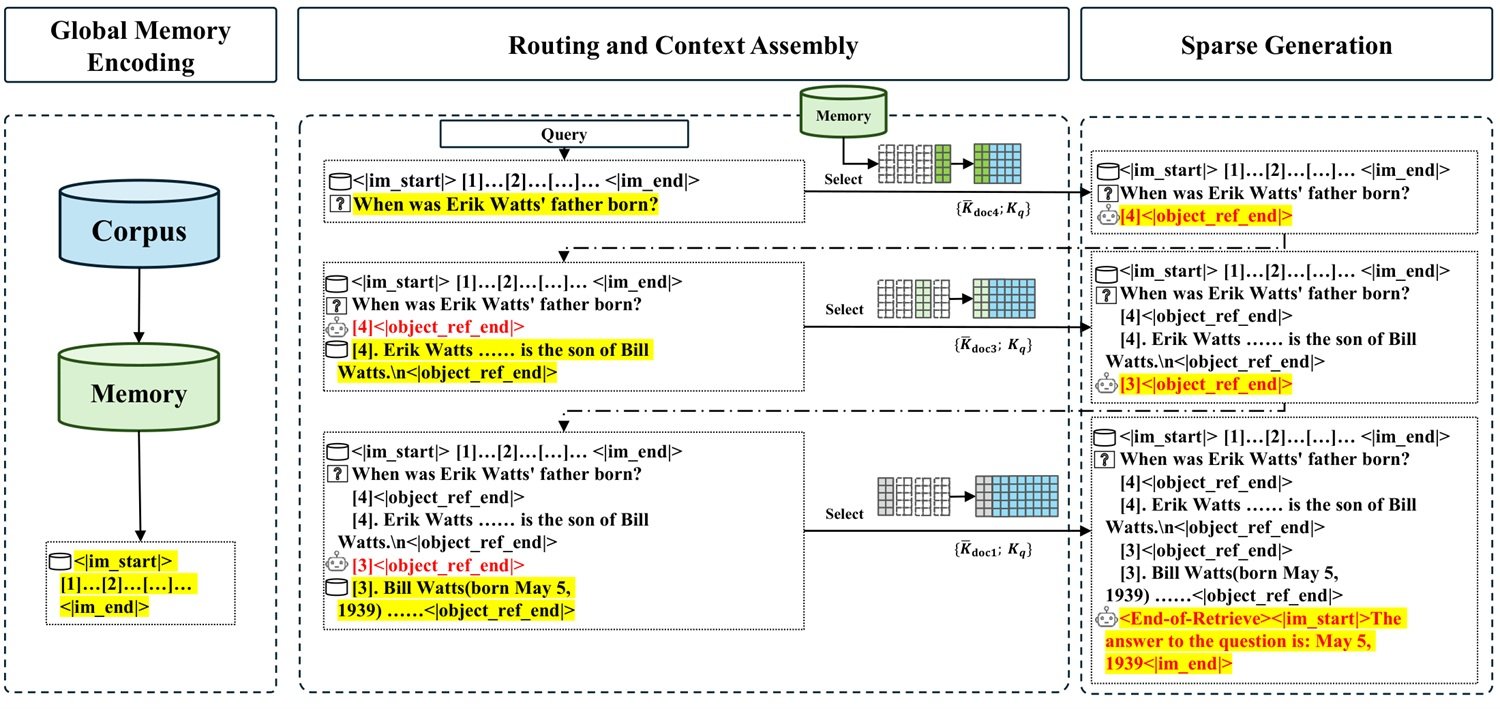

The third pillar of the architecture is probably the most interesting from a reasoning standpoint, and also the one that raises the most difficult questions. It's called Memory Interleave, and it addresses a problem that traditional RAG systems handle poorly: multi-hop questions.

A multi-hop question is one whose answer requires linking information scattered across different documents, where information in document B is necessary to understand which part of document C to search for. "Who was the director of the favorite film of the writer who won the Booker Prize in 1981?" is a trivial example: at least three steps are needed, and each step depends on the result of the previous one. A RAG system that retrieves documents only once, before generating, structurally struggles with this type of reasoning. It might retrieve five documents, hoping they contain everything necessary, but it's a blind bet.

Memory Interleave instead proposes an iterative cycle: the model alternates between "generative retrieval" phases (producing identifiers of the documents it wants to read), actual retrieval phases of those documents, and partial generation phases, until it has enough evidence to answer. The number of documents retrieved for each round is dynamically determined by the model itself, not by a fixed hyperparameter. Ablation experiments in the paper show that removing Memory Interleave causes an average drop of 5.3 percentage points on QA benchmarks, and 19.2 points on HotpotQA alone, a dataset specifically designed for two-hop reasoning.
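The alternation between proposing documents, retrieving them, and checking the evidence can be illustrated with a toy loop. Everything here is an assumption made for illustration: the three-document corpus uses placeholder entities, `propose_doc_ids` is a hard-coded stand-in for the model's generative retrieval, and it always requests one document per round, whereas the paper describes a model-chosen number.

```python
# Toy corpus: each document answers one hop and points to the next entity.
CORPUS = {
    "prize_1981": {"text": "The prize went to writer_X.", "next": "writer_X"},
    "writer_X": {"text": "writer_X's favorite film is film_Y.", "next": "film_Y"},
    "film_Y": {"text": "film_Y was directed by director_Z.", "next": None},
}


def propose_doc_ids(evidence, start):
    """Stand-in for generative retrieval: emit the IDs to read next."""
    if not evidence:
        return [start]
    next_id = evidence[-1]["next"]
    return [next_id] if next_id is not None else []


def interleaved_retrieval(start, max_rounds=5):
    """Alternate between proposing IDs and fetching them until nothing more is needed."""
    evidence = []
    for _ in range(max_rounds):
        doc_ids = propose_doc_ids(evidence, start)
        if not doc_ids:  # the stand-in "model" judges the evidence sufficient
            break
        evidence.extend(CORPUS[doc_id] for doc_id in doc_ids)  # actual retrieval
    return [doc["text"] for doc in evidence]


def single_shot_retrieval(start):
    """Retrieve once, before generating, as a one-pass RAG pipeline would."""
    return [CORPUS[start]["text"]]


print(interleaved_retrieval("prize_1981"))
print(single_shot_retrieval("prize_1981"))
```

The single-shot version stops at the first hop because the later document IDs only become knowable after earlier documents have been read, which is exactly the dependency that multi-hop questions create.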

These numbers are convincing, but they raise a question that the paper does not explicitly resolve: does Memory Interleave improve the model's reasoning, or does it simply improve retrieval? The distinction is not academic. If the effect is mainly that the model retrieves more useful information thanks to iterations, then the underlying reasoning capability remains that of the Qwen3-4B backbone, and the improvements on HotpotQA reflect smarter retrieval, not superior inferential capability. If, on the other hand, the iterative mechanism allows the model to build more complex reasoning chains than those it could build with all the information already present in the context, then we are facing something structurally new.

The paper does not provide experiments that clearly distinguish between these two scenarios, and the fact that MuSiQue—the multi-hop reasoning dataset with three or more steps—shows the lowest absolute score in the entire table (2.211 out of 5) suggests that as inferential complexity grows, the gains diminish. This is not a criticism; it is an open area that subsequent research will need to explore.

The Numbers Under the Microscope

Let's move on to the benchmarks, which deserve separate attention and a critical eye. The experimental setup described in the GitHub repository uses nine question answering datasets (MS MARCO, Natural Questions, DuReader, TriviaQA, NarrativeQA, PopQA, 2WikiMultiHopQA, HotpotQA, MuSiQue) with memory banks ranging from 277,000 to 10 million tokens, plus NIAH (Needle-in-a-Haystack, in the RULER version) tests up to one million tokens. The backbone is Qwen3-4B-Instruct, a four-billion-parameter model from 2025.

The results in comparison with RAG on the same backbone are genuinely impressive: MSA achieves an average score of 3.760 out of 5 compared to 3.242 for the best RAG with reranker, which on these figures is a relative improvement of roughly 16%. On MS MARCO, the dataset with the largest memory bank (7.34 million tokens), the gap widens significantly: 4.141 against 3.032, about a 37% advantage. On HotpotQA, MSA outperforms RAG+reranker by about 4%. On NarrativeQA, however, it loses: RAG with reranker gets 3.638 against MSA's 3.395, making it the only dataset out of nine where MSA is not the best in the same-backbone comparison.
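Relative gaps like these are worth recomputing by hand when reading benchmark tables. The short sketch below does so for the two per-dataset score pairs quoted above; `relative_gain` is a hypothetical helper, and the scores are the ones reported in this article, not independently measured values.

```python
def relative_gain(candidate: float, baseline: float) -> float:
    """Percentage by which `candidate` exceeds (or trails) `baseline`."""
    return (candidate - baseline) / baseline * 100.0


ms_marco = relative_gain(4.141, 3.032)      # MSA vs. RAG + reranker
narrativeqa = relative_gain(3.395, 3.638)   # MSA vs. RAG + reranker

print(f"MS MARCO:    {ms_marco:+.1f}%")
print(f"NarrativeQA: {narrativeqa:+.1f}%")
```

The MS MARCO gap comes out just under 37%, while the NarrativeQA deficit is a little under 7%, which gives a sense of proportion to the one dataset where MSA loses.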

The comparison with RAG systems on much larger backbones (KaLMv2 with Qwen3-235B and with Llama-3.3-70B) is more nuanced. MSA with 4B parameters achieves the best absolute average (3.760) but loses on four out of nine datasets compared to the strongest configurations, and the average advantage over Qwen3-235B with reranker is 5%. In practice, a model sixty times smaller keeps pace with a 235-billion-parameter giant when the architectural advantage in memory management is large enough to compensate for the scale difference. It is a remarkable result, but it should be read without hyperbole.

However, two aspects of the experimental setup deserve to be stated explicitly, because they affect how the numbers should be read. First: the evaluation metric for QA benchmarks is an LLM judge assigning scores from 0 to 5. While this type of evaluation has become standard in the field, it carries intrinsic variability, and the fact that the judge model is not specified in the README adds an opacity that would not be acceptable in a peer-reviewed paper. Second: the main scalability benchmark, the 16K→100M token curve with less than 9% degradation, is measured on MS MARCO, a single dataset. The generalization of that curve to other types of content, question distributions, or languages other than English remains to be proven.

On the NIAH front, the results are clearer and easier to evaluate: MSA maintains 94.84% accuracy at one million tokens, while the unmodified backbone collapses to 24.69%. Hybrid linear attention models (the most direct competition) show appreciable degradation as early as 128,000-256,000 tokens. This is the paper's solidest result, as the NIAH benchmark is standardized, widely used in the community, and leaves little room for interpretative ambiguity.

At the time of writing, the code is publicly available on the GitHub repository, with installation instructions, the MSA-4B model downloadable directly from Hugging Face, and benchmarks replicable via scripts. This is a non-trivial sign of maturity: it means the results are in principle verifiable by anyone with the appropriate hardware, and the community can start testing the system on its own use cases.

100 Million Tokens on 2×A800: At What Price?

"Near-linear complexity" is one of the most overused phrases in the Transformer research vocabulary, and almost always requires translation. In MSA's case, the formal complexity of O(L) refers to the routing and sparse attention phase, but it hides a series of concrete costs that a serious article cannot ignore.

The first cost is fetch latency from CPU memory. When the router decides which documents to load, that data must be transferred from system RAM to GPU VRAM via the PCIe bus, which has a typical bandwidth of 16-32 GB/s. For one hundred million tokens, even with aggressive KV cache compression, the amount of data to transfer per query can be on the order of gigabytes. The paper does not report explicit end-to-end latency numbers for single queries, and this is a relevant omission for anyone wanting to evaluate production feasibility.
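A back-of-the-envelope calculation shows why this matters. The sketch below divides a payload size by the bus bandwidth to get a lower bound on transfer time. The payload sizes are hypothetical, since the paper gives no per-query figures, and the result ignores protocol overhead, pinned-memory effects, and any overlap with computation.

```python
def transfer_seconds(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Idealized time to move a payload across the bus at full bandwidth."""
    return payload_gb / bandwidth_gb_per_s


for payload_gb in (0.5, 1.0, 4.0):  # hypothetical per-query payloads, in GB
    slow = transfer_seconds(payload_gb, 16.0)  # lower end of the quoted range
    fast = transfer_seconds(payload_gb, 32.0)  # upper end of the quoted range
    print(f"{payload_gb:>4.1f} GB -> {fast * 1000:.0f} to {slow * 1000:.0f} ms")
```

Even under these optimistic assumptions, a one-gigabyte fetch costs tens of milliseconds per retrieval round, and an iterative scheme pays that cost once per round.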

The second cost is the offline encoding phase. Before MSA can answer any questions about a corpus, it must process all documents in that corpus to produce the K̄, V̄, and Kᵣ representations. This is a one-time cost for static corpora, but it becomes a recurring problem for frequently updating corpora, such as conversation histories in an agent application or real-time business data feeds. The paper describes this as offline encoding, suggesting the architecture is primarily intended for relatively stable corpora.

The third cost is persistent memory. A corpus of 100 million tokens, even with aggressive compression, requires significant amounts of system RAM. Without specific figures from the paper on actual compression ratios and the resulting sizes of the compressed KV cache, it is difficult to estimate precise infrastructure costs, but for an enterprise deployment on real corpora, we are likely looking at hundreds of gigabytes of RAM. Not impossible, but not trivial.
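To see how such an estimate could be built, the sketch below computes the uncompressed KV footprint per token and scales it to 100 million tokens under several compression ratios. All inputs are assumptions chosen for illustration: the layer count, KV-head count, and head dimension describe a generic small model with grouped-query attention in 16-bit precision, and the compression ratios are hypothetical, not figures from the paper.

```python
def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                       bytes_per_value: int = 2) -> int:
    """Uncompressed KV cache per token: keys plus values, across all layers."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def corpus_ram_gb(tokens: int, per_token_bytes: int, compression_ratio: float) -> float:
    """System RAM (in GB, 1e9 bytes) needed to hold the compressed cache."""
    return tokens * per_token_bytes / compression_ratio / 1e9


# Assumed model shape (illustrative, not taken from the paper).
per_token = kv_bytes_per_token(layers=36, kv_heads=8, head_dim=128)

for ratio in (1, 16, 64):  # hypothetical compression ratios
    gb = corpus_ram_gb(100_000_000, per_token, ratio)
    print(f"compression {ratio:>2}x -> {gb:,.1f} GB")
```

Under these assumptions the uncompressed cache would run to several terabytes, and it takes a compression ratio in the tens to bring the footprint down to hundreds of gigabytes, which is why the missing compression figures matter so much for any cost estimate.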

This leads to the central question, which is worth formulating explicitly: does MSA solve the memory bottleneck, or does it shift the complexity to storage, retrieval, and orchestration? The honest answer is that both are true at the same time. MSA genuinely alleviates the quadratic complexity problem of attention, integrating retrieval in a differentiable way and obtaining better results than RAG on practically all tested benchmarks. But it does not eliminate architectural complexity: it distributes it across different components, some of which (CPU-GPU transfer, RAM management, offline encoding) are not yet well documented in the available public material. For a research team, it is real progress. For an engineering team that needs to bring it into production, open questions remain.

EverMind: Memory as a Service

It's worth understanding who is behind MSA, because how the project should be read depends heavily on that context. EverMind is a Singapore-based startup founded with the stated goal of building long-term memory infrastructure for AI agents. Its main product is EverOS, a memory management system for agents that integrates various techniques, including MSA, into a broader platform. At the end of March 2026, the team published results on four memory benchmarks (LoCoMo, LongMemEval, PersonaMem, and a proprietary evaluation framework), claiming state-of-the-art performance.

The trajectory is that of a startup building what is known in industry jargon as "Memory-as-a-Service": a layer of abstraction that sits between base language models and applications, managing persistence, retrieval, and the organization of agent memories over time. It is a consistent industrial positioning, and MSA is its technical foundation.

The paper was published on arXiv rather than in a minor venue, and the code, the MSA-4B model, and the benchmark scripts are all publicly available. The research is communicated publicly and the technical assets are open: for an early-stage startup, this is not the norm, and it weighs positively in the overall evaluation of the project.

The thirteen authors of the paper (including Chen Yu, Chen Runkai, Yi Sheng, and others, with Lidong Bing and Tianqiao Chen as senior figures) represent a team of credible size and profile for the type of work described. There are currently no independent evaluations of the results by third-party research groups, which is normal for a paper from just a few months ago, but it is an element to keep in mind when reading the improvement percentages.

Elegant Proof-of-Concept or Ready System?

This is the question every honest article about this type of research must ask, and the answer here is bittersweet. MSA is, at present, a convincingly described system, with credible benchmark results on a test set wide enough not to be dismissed as cherry-picking, and with an architecture that has a coherent and well-motivated internal logic. It is not an empty promise.

At the same time, it is probably not yet a production-ready system in the engineering sense of the term. The 100-million-token scalability benchmarks are demonstrated on only one dataset (MS MARCO), and the actual degradation on other types of content at that scale is unknown. Performance on NarrativeQA, the dataset with the longest stories and the heaviest demands on contextual understanding, is the only case where MSA loses to RAG with reranker, suggesting that dense narrative content might be a weak point. End-to-end query latency, corpus encoding cost, and system RAM requirements are not communicated with the precision necessary for an engineering evaluation.

There is also a more subtle question about portability. All results are obtained on Qwen3-4B, a specific backbone with specific characteristics. MSA requires training the router and the sparse attention mechanism end-to-end: it cannot simply be bolted onto any pre-trained model like a plugin. The paper describes training on 158.95 billion tokens with an auxiliary routing loss, followed by two stages of supervised fine-tuning with curricula from 8,000 to 64,000 tokens. Replicating this process on a different backbone (Llama, Mistral, Gemma) would require considerable resources and time, and it is not guaranteed that the results would transfer identically.

Disco Elysium, the narrative role-playing game by ZA/UM, builds its memory system on fragments, whispers, and memories that the protagonist retrieves iteratively and often unreliably to reconstruct a larger story. MSA, in some way, does something similar: it retrieves scattered fragments from an enormous library, assembles them iteratively, and tries to build a coherent line of reasoning. The difference is that in the game, retrieval failure is part of the story; in a production system, it's a bug. How often MSA fails to retrieve the right fragments, and with what consequences, is exactly the type of question that only public code and independent experimentation can answer.

MSA deserves attention, study, and direct experimentation. The question of whether it truly solves the memory bottleneck or simply shifts it to infrastructure, storage, and orchestration does not yet have a definitive answer, and probably won't until someone outside EverMind has been able to dismantle and reassemble it on a real use case. In the meantime, it is one of the most interesting architectures to appear this year in the memory space for language models, and the GitHub repository is already worth keeping under observation.