AI at Home: LM Studio and Qwen 3.5 on My PC - Episode 2

This episode continues from Episode 1, which covered the hardware configuration, the choice of LM Studio as the local runtime, and the first three tests: scientific reasoning on the Higgs mechanism, multimodal reading of a blurry spreadsheet, and code generation for an NP-hard problem.

Over the last two weeks, the conversation around Qwen 3.5 has spread beyond international technical forums. In Italy, commentators such as Salvatore Sanfilippo, one of the most followed voices on applied artificial intelligence, have brought the model to a wider audience. They have helped make this release one of the most discussed topics of the season in the Italian AI ecosystem. This is not social media hype. It reflects a recognition that something structural is changing in open-weight models, and that the change is now tangible enough to deserve attention outside research circles.

The three tests that close this second episode were designed to cover the areas that matter most to people who are not researchers but who use AI to work, plan, analyze, and organize. These are reasoning in multiple languages while keeping cultural coherence, handling long documents with surgical precision, and understanding physical space from an image.

Test 4 — Travel Agent in Three Languages

The fourth test targeted two of the model's most advertised capabilities: broad multilingual support (Qwen 3.5 supports 201 languages) and agentic performance on complex planning tasks. I imagined a French client who doesn't speak English and wants to visit Tokyo and Kyoto, with a focus on historical temples and street food. The request was detailed. It called for a five-day itinerary in impeccable French with practical advice on transport and language barriers. A section in Italian was to follow for a second traveler planning the same route.

The response could have been a generic itinerary pieced together from a database of travel guides. It was not. The French was that of a high-end travel consultant: formal but warm, precise without being bureaucratic. The itinerary had real logistics. Day one: arrival in Tokyo and a first immersion in Asakusa and Senso-ji. Day two: the Meiji Shrine and the old Tsukiji market, with the note that sushi there is eaten at the counter and paid for by the piece. Day three: the shinkansen to Kyoto and a late-afternoon walk through the Arashiyama bamboo grove. Day four: the climb through the torii gates of Fushimi Inari, with an explicit warning to wear comfortable shoes, then an evening in Pontocho, where you might cross paths with geisha. Day five: the Nishiki market, "Kyoto's belly," as the model called it, before departure.

Details are what separate information from knowledge. The response noted that Suica and Pasmo are the rechargeable transit cards, that Google Translate with offline language packs is almost indispensable in Japan, and that you take off your shoes in temples. All present, all correct. The Italian section was a practical summary in fluent, useful Italian. Instead of pedantically repeating the whole itinerary, it condensed the essential tips for someone who already knows the route. Switching languages did not lower the quality: tone, cultural relevance, and accuracy stayed consistent.

Grade: 5/5. An agent who knows Japan like a tour guide, writes in French like a native speaker, and summarizes in Italian without losing the thread.

Test 5 — The Needle in the 460-Page Haystack

The fifth test was the most technically demanding, and probably the most relevant for anyone using AI for professional document analysis. I loaded the Stanford HAI Artificial Intelligence Index Report 2025 into LM Studio. It runs to 460 pages and about 20 MB, with tens of thousands of words plus charts, tables, and thematic chapters. Nobody reads a volume like that cover to cover in one sitting. The question seemed simple: find the data on the growth of video generation and tell me which page it is on.

The first attempt produced no answer. The second, silence. The third, the same. Each time the model carried out its reasoning, which is visible and can be inspected, but it never produced a final output. I had to prompt it explicitly, pointing out that it sometimes finishes processing a request without writing an answer in the chat. On the fourth attempt the answer arrived, and it was surprisingly precise.
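The workaround described above can be automated: re-ask, and tell the model explicitly that it has finished reasoning without answering. What follows is a minimal sketch, not how LM Studio itself behaves. It assumes the model wraps its reasoning in `<think>...</think>` tags, as Qwen thinking models typically do; depending on your LM Studio settings, reasoning may instead arrive in a separate field. It also takes a hypothetical `send` callable that submits the messages and returns the raw reply text. In practice `send` could wrap a request to LM Studio's OpenAI-compatible local server, by default at `http://localhost:1234/v1`. The nudge wording and the attempt limit are illustrative choices.

```python
import re
from typing import Callable, Dict, List

# Assumption: reasoning arrives wrapped in <think>...</think> tags.
# An unclosed block (reasoning cut off mid-way) is removed up to the end of the text.
THINK_BLOCK = re.compile(r"<think>.*?(?:</think>|\Z)", re.DOTALL)

Message = Dict[str, str]


def final_answer(raw: str) -> str:
    """Remove reasoning blocks and return the visible answer (may be empty)."""
    return THINK_BLOCK.sub("", raw).strip()


def ask_until_answered(
    send: Callable[[List[Message]], str],  # hypothetical transport to the model
    prompt: str,
    max_attempts: int = 4,
    nudge: str = (
        "Note: you sometimes finish reasoning without writing an answer. "
        "Please write your final answer explicitly."
    ),
) -> str:
    """Re-ask until the reply contains an answer outside the reasoning block."""
    content = prompt
    for _ in range(max_attempts):
        answer = final_answer(send([{"role": "user", "content": content}]))
        if answer:
            return answer
        # After an empty reply, repeat the question with an explicit nudge.
        content = f"{prompt}\n\n{nudge}"
    raise RuntimeError(f"no answer after {max_attempts} attempts")


if __name__ == "__main__":
    # A fake sender that reasons without answering once, then answers.
    replies = iter(["<think>scanning chapters...</think>", "<think>found it</think>Answer text"])
    print(ask_until_answered(lambda messages: next(replies), "Which page?"))  # → Answer text
```

Sending a fresh single-turn request with the nudge, rather than accumulating history, keeps each retry from carrying the previous attempt's reasoning into an already crowded context.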

The model identified pages 126 and 127 of Chapter 2 (Technical Performance), in the "Image and Video" section, and described their contents. Page 126 has charts on the Google Veo, Meta Movie Gen, and OpenAI Sora models, including user preference graphs (Figures 2.3.11 and 2.3.12). Page 127 has a comparison of videos generated over time. Then, unprompted, it surfaced a specific example: the "Will Smith eating spaghetti" prompt. That prompt has become a small informal case study of how the quality of AI-generated video has evolved, the kind of cultural detail a good researcher would put in a footnote.

The empty responses in the first three attempts are a real limitation and must be reported honestly. They probably stem from the sheer volume of data to process and from how LM Studio handles very large context windows. This is not a five-minute fix; it takes an understanding of your own setup, and patience. But when the model does respond, it responds well.
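One common way to relieve pressure on the context window is to avoid sending the whole report at once. Instead, split the extracted text into page-tagged chunks that fit a token budget, query each chunk, and let the `[Page N]` markers carry page numbers into the answer. This is a swapped-in technique, not what was done in the test, which loaded the full PDF into LM Studio. The sketch below assumes about four characters per token, a rough heuristic for English text. The real tokenizer will give different counts, so leave a margin in `budget_tokens`. `chunk_pages`, `Chunk`, and `estimate_tokens` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List

CHARS_PER_TOKEN = 4  # rough heuristic for English text; the real tokenizer will differ


@dataclass
class Chunk:
    first_page: int
    last_page: int
    text: str


def estimate_tokens(text: str) -> int:
    # Ceiling division, so any non-empty text counts as at least one token.
    return -(-len(text) // CHARS_PER_TOKEN)


def chunk_pages(pages: List[str], budget_tokens: int, first_page: int = 1) -> List[Chunk]:
    """Group consecutive pages into chunks whose estimated size stays within budget.

    Each page is prefixed with a [Page N] marker so answers can cite page numbers.
    Separators between pages are not counted, and a single page larger than the
    budget becomes its own (oversized) chunk.
    """
    chunks: List[Chunk] = []
    current: List[str] = []
    start = first_page
    used = 0
    for offset, page in enumerate(pages):
        number = first_page + offset
        tagged = f"[Page {number}]\n{page}"
        cost = estimate_tokens(tagged)
        # Close the current chunk if adding this page would exceed the budget.
        if current and used + cost > budget_tokens:
            chunks.append(Chunk(start, number - 1, "\n\n".join(current)))
            current, used, start = [], 0, number
        current.append(tagged)
        used += cost
    if current:
        chunks.append(Chunk(start, first_page + len(pages) - 1, "\n\n".join(current)))
    return chunks
```

The `pages` list could come from any PDF text extractor, for instance pypdf's `PdfReader(path).pages` with `page.extract_text()`. PDF page indices and the page numbers printed in a report do not always match. The `first_page` parameter applies a constant offset to realign them.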

Grade: 4.5/5. Pinpoint accuracy in retrieving information from a 460-page document: exact pages, numbered figures, cultural examples. Half a point off for the three empty attempts, a behavior any real workflow must account for.

Test 6 — The Surveyor of Domestic Chaos

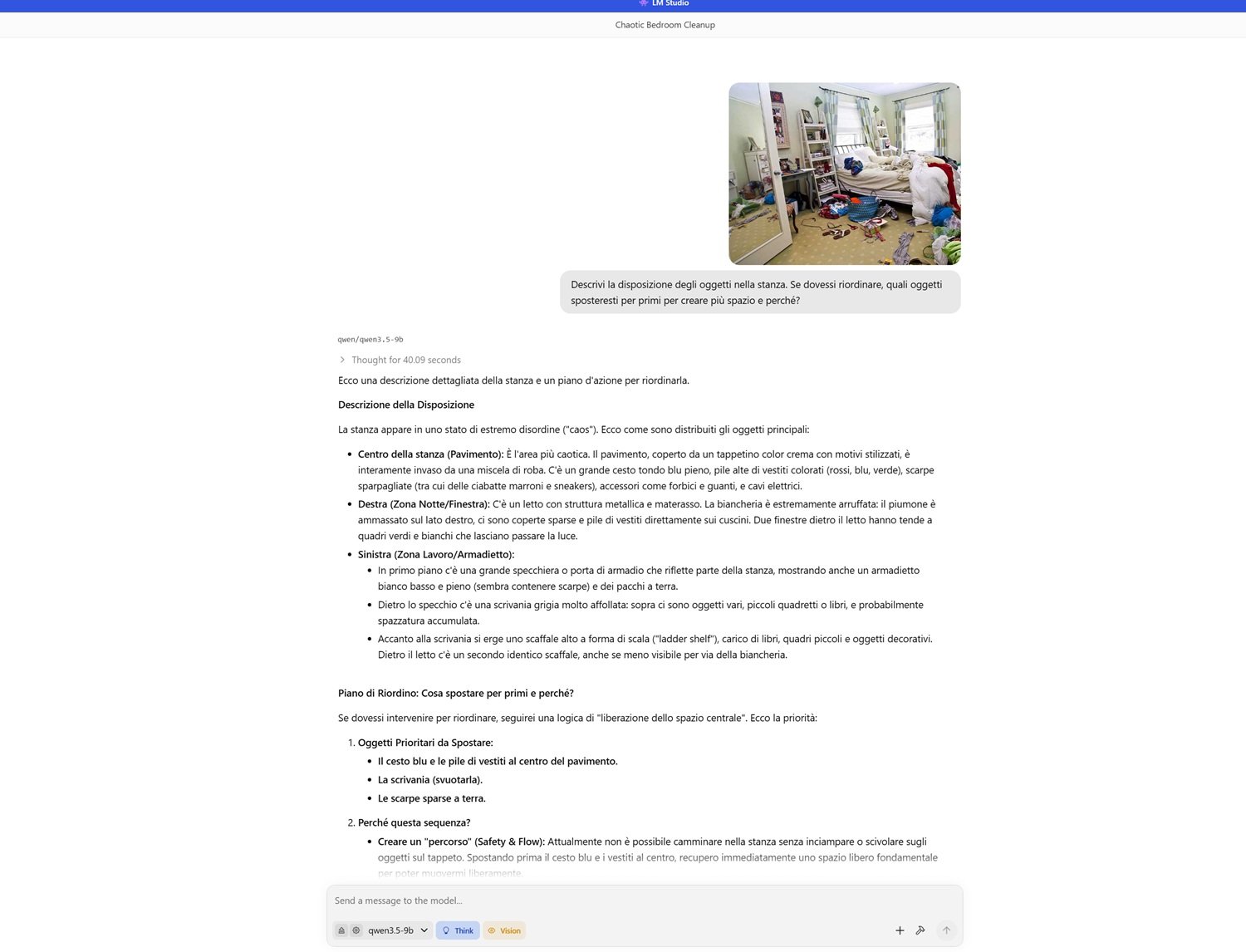

The last test was perhaps the most unusual, and the one that produced the richest narrative response. I downloaded a low-quality photo of a very messy room from the web. It showed clothes everywhere, an unmade bed, a desk buried in paper, overloaded shelves, and objects scattered on the floor. I uploaded the photo to LM Studio and asked the model to describe how the objects were arranged and to propose a strategy to free up space.

The description of the room was visually faithful. It covered the blue basket in the center blocking the main path, the piles of colorful clothes grouped by color and material, and the brown slippers and sneakers scattered near the entrance. It also noted the bed on the right, with linen piled up so that the nightstand was out of reach, and the desk on the left, "crowded like a nest of visual clutter." The most impressive detail, though, was this: the model noticed that the wall mirror reflected the white cabinet and some boxes on the floor. It perceived not only the visible objects but also the spatial relationships revealed by reflections, a three-dimensional understanding of space that could not be taken for granted.

The proposed tidying strategy followed impeccable logic. First clear the center of the room to create a safe path. Then empty the desk and sort its contents. Then make the bed to reclaim visual surface. Finally, put things away in the now-accessible cabinets. Each step came with a reason. The center comes first because it is the most immediate tripping hazard. The bed comes next because making it changes how the whole room looks, not just how it works. It is the logic of someone who understood not only what is in that room, but how the space works for the people who live in it.

Grade: 5/5. Three-dimensional spatial understanding, analysis of reflections, step-by-step motivated intervention strategy. An interior designer would not have done better.

The Final Account

Six tests across six areas give a reasonably complete picture from which to draw conclusions, keeping in mind that this remains a personal experiment, not a systematic evaluation.

What emerges clearly is that Qwen 3.5 9B, running at 30 tokens per second on a consumer GPU with 16 GB of VRAM, does things that until a year ago required access to expensive frontier APIs. It explains quantum physics with the clarity of a good teacher and reads blurry tables like an analyst. It writes code with a theoretical awareness of its limits and plans multilingual trips with cultural coherence. It finds specific pages in a 460-page report and describes a messy room down to its reflections. All of this runs offline, without sending a single byte to any server.
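Why a 9B model fits comfortably in 16 GB of VRAM comes down to simple arithmetic: the weights take roughly parameters × bits per weight ÷ 8 bytes. This episode does not state which quantization was used, so the sketch below compares nominal precisions and treats "9B" as exactly 9 billion parameters. It ignores the KV cache, activations, and runtime overhead. All of these consume part of the remaining memory, and the KV cache grows with context length. Real 4-bit formats typically spend slightly more than 4 bits per weight once scaling factors are included.

```python
VRAM_GB = 16  # the consumer GPU described in this article


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes).

    Ignores KV cache, activations and runtime overhead, which all add to this.
    """
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    for label, bits in [("16-bit (FP16/BF16)", 16), ("8-bit", 8), ("4-bit", 4)]:
        need = weight_memory_gb(9, bits)
        verdict = "fits" if need < VRAM_GB else "does not fit"
        print(f"{label}: {need:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

This prints 18.0 GB for 16-bit weights, which does not fit, and 9.0 GB and 4.5 GB for the 8-bit and 4-bit cases, which do. The memory left over is what the context window has to live in.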

The limits are real and must be stated plainly. The main problem is the model stalling on very long outputs or extended contexts. It requires explicit prompts and introduces uncertainty that anyone using these tools in production must manage. The interrupted first attempt in the coding test and the three silences in the document test are not negligible defects. They are behaviors a professional user must learn to anticipate.

One open question remains that no local test can settle: privacy and the provenance of the training data. Qwen is developed by Alibaba Cloud, a Chinese company subject to Beijing's legislation. Running the model locally solves the issue of data transmission during inference, because the prompts never leave the machine. It says nothing, however, about what the model saw during training, or about potential biases tied to the geopolitical context of its creators. For many personal and professional uses the question is irrelevant. In regulated sectors, or wherever data sovereignty is a legal constraint, it deserves careful thought before the model is integrated into a critical workflow.

On the cloud side, the competition remains uneven for tasks that require deep multi-step reasoning, encyclopedic knowledge updated in real time, or the handling of massive contexts without unpredictable behavior. In these scenarios, frontier models like Claude, ChatGPT, and Gemini still play in a different league. But the gap narrows with every release, and the direction is clear.

The Desire to Continue

This experience was what I hoped it would be: instructive, concrete, at times surprising. Installing a model of this quality locally, on a PC that is not a five-thousand-euro workstation, and getting responses that hold up against the best cloud services, would have seemed out of reach only twelve months ago. It isn't anymore.

Qwen 3.5 9B is certainly the most discussed open-weight model of recent weeks, and the reputation the family built with earlier versions was well founded. But it is just one point in a rapidly evolving ecosystem. For those with less VRAM or a focus on coding, Microsoft's Phi-4-mini deserves attention. For those working mainly in Italian or other European languages, Mistral's models have specific strengths worth exploring. Each model excels at something and falls short at something else. The choice always depends on the use case, and only the person at the keyboard knows the use case.

The point, however, is not which model to choose. The point is that the choice exists, is accessible, and works. Local LLMs, or SLMs if you prefer the more precise term, are no longer experiments for enthusiasts with lab-grade hardware. They are current, working, improvable tools that respect privacy. On hardware just above standard consumer level, they become powerful allies for designing, writing, analyzing, and building.

You just need to be willing to get your hands dirty. And with these tools, hands get less and less dirty.