Stanford AI Index Report 2026: AI Accelerates, Governance Slows Down

Fifty-three percent global adoption in three years, faster than the internet and the personal computer. Eighty-eight percent of organizations claiming to use AI. A programming benchmark, SWE-bench Verified, jumping from 60% to nearly 100% in twelve months. Private investment in the US at $285.9 billion, twenty-three times that of China. A fifty-percentage-point gap between what experts expect from AI and what the public thinks. These five numbers frame the perimeter of the 2026 AI Index Report from Stanford HAI: the ninth edition of a document that serves as a ruthless mirror of a sector accelerating much faster than anyone can measure, regulate, or socially absorb it.

Structured into nine chapters (research and development, technical performance, responsible AI, economy, science, medicine, education, governance, and public opinion) and drawing on data from Epoch AI, LinkedIn, GitHub, McKinsey, and the OECD, the report opens with a premise that is almost a confession: "The data doesn't point in a single direction. It reveals a field scaling faster than the systems around it." This is not rhetoric. It is the thread that runs through the entire document.

Numbers That Don't Lie

Before diving into the details, it's worth pausing on what the report communicates most strongly. Generative AI has reached 53% adoption across the population in less than three years; the personal computer took more than a decade to get there. Private industry produced 91.6% of the "notable" models of 2025, while only one academic model was identified in the entire year. The university, which built the foundations of this field, is now almost irrelevant in the production of frontier systems. The economic value of generative AI tools for American consumers alone reached $172 billion annually, with the average value per user tripling in a year, and nearly all of it accessible for free: one of the most asymmetrical technological value transfers in recent history.

The Jagged Frontier: Where Models Excel and Where They Still Fail

There is an image in the report worth a thousand charts. Google's Gemini Deep Think won a gold medal at the 2025 International Mathematical Olympiad, competing against the best high school mathematicians on the planet. The same model, or models of the same level, reads an analog clock correctly only 50.1% of the time. In practice, little better than a coin toss.

This is the concept of the jagged frontier, which the report uses as an interpretative key for the current moment. AI systems are neither omnipotent nor trivial: they are extraordinarily capable in certain domains and surprisingly fragile in others, often without an intuitive logic guiding the distinction. Olympiad mathematics is a structured, symbolic task with precise and verifiable rules. Reading a clock requires spatial perception and visual-semantic mapping, which remains difficult for current models.

Where has progress been most evident in the last year? In code: the SWE-bench Verified benchmark, which measures the ability to resolve real issues in GitHub repositories, went from 60% to nearly 100% relative to the human baseline in just one year. In autonomous agents: on OSWorld, which tests real tasks that involve operating a computer through its operating system, the success rate jumped from 12% to about 66%. In scientific benchmarks: several frontier models now match or exceed the human baseline on doctoral-level questions in physics, chemistry, and mathematics.

Where do the limits remain deep? In physical robotics: robots succeed in only 12% of domestic tasks, despite reaching 89.4% in simulations in controlled environments. The gap between the lab and a real kitchen is still an abyss. And in reasoning that requires contextual judgment, common sense, or understanding of communicative subtexts: here models show the most evident cracks, those that least lend themselves to being measured by a benchmark.

The comparison with 2025 is illuminating. Last year, the report documented the arrival of AI as a mainstream force. This year, it documents what happens after the arrival: benchmark saturation (more and more models pass them, making them less informative), increasing opacity of labs (less transparency on parameters, datasets, compute), and a divergence between the capabilities claimed by developers and those verified by independent tests. It's the difference between a film debut and the sequel: bigger, more expensive, but with fewer surprises.

USA vs. China: The Overtaking That Isn't (Yet)

The geopolitical chapter of the report is probably the most followed by political and industrial decision-makers. And the 2026 data confirms a trend that those following this sector had already guessed: the American advantage in frontier models has narrowed to an almost symbolic distance.

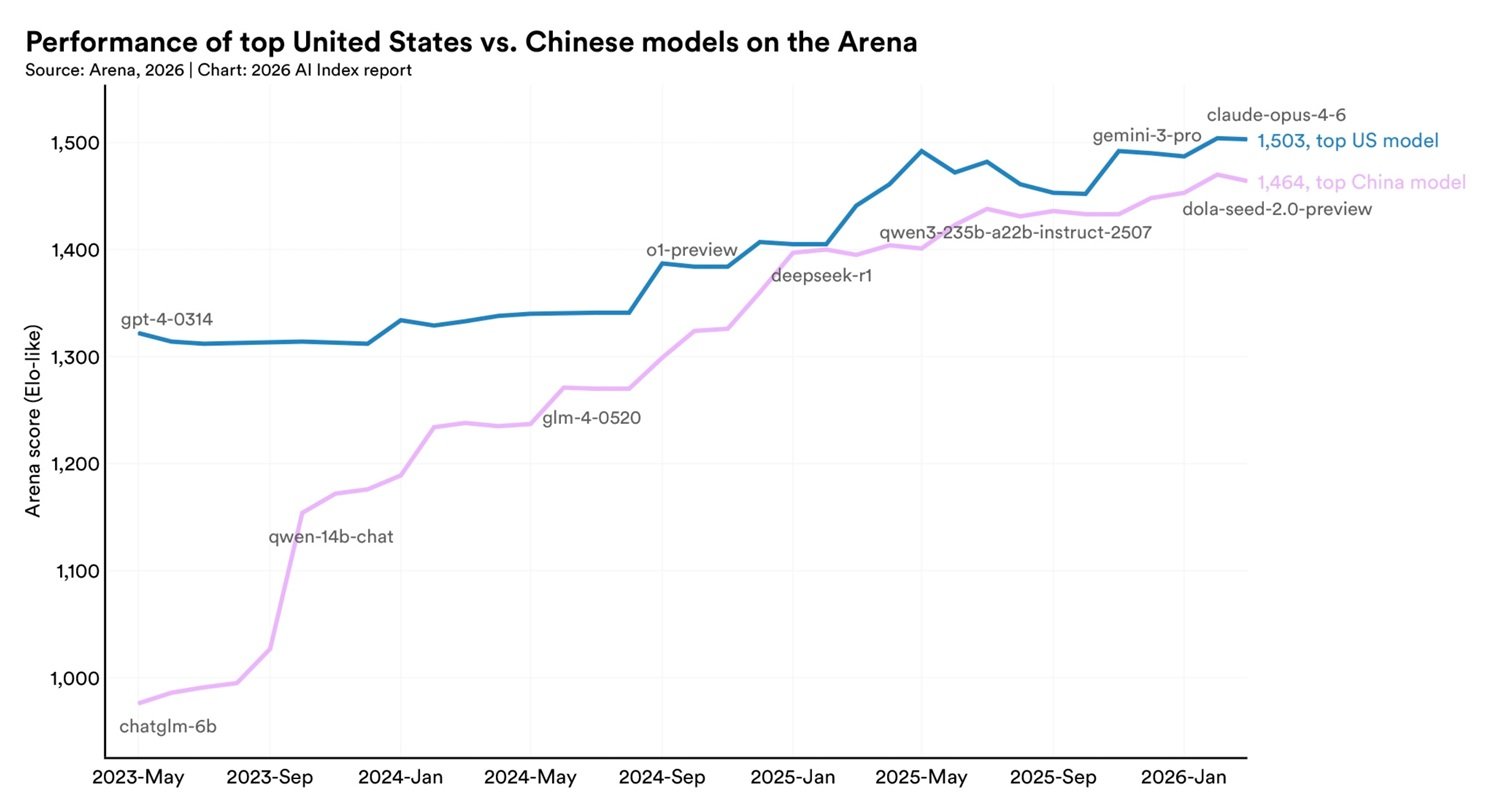

In February 2025, DeepSeek-R1 briefly equaled the best available American model. In March 2026, Anthropic's flagship model leads the rankings with a margin of 2.7% over the best Chinese model. In a field where benchmarks are updated every week, a 2.7% margin is not a strategic advantage: it's statistical noise. Models from the two countries have swapped the top spot multiple times over the last year.

This data must be read alongside the broader structure of the competition. The United States remains ahead in the production of notable models (50 in 2025 against China's 30) and in high-impact patents. China, on the other hand, leads in the volume of scientific publications, citations, overall patent output, and industrial robot installations. China increased its share of the 100 most-cited AI articles in the world from 33 in 2021 to 41 in 2024. South Korea emerges as an outlier in innovation density: first in the world in AI patents per capita.

The picture is of a technological competition that has settled into a standoff between near-equals rather than unilateral dominance. Anyone reading this data in light of chip export controls and the semiconductor war cannot help but wonder whether those measures achieved the desired result. The answer emerging from Stanford is uncomfortable: probably not.

On AITalk, we have followed this trajectory closely. Last year we documented the capabilities of Zhipu AI's GLM-5, a Chinese model that already showed competitive performance on multimodal benchmarks. In the analysis of the AI war between the USA and China, we highlighted how American restrictions were paradoxically accelerating Chinese technological autonomy in the field of chips and models. Kimi K2 by Moonshot AI and especially DeepSeek, with its ability to produce competitive models at drastically lower costs, made that prediction real. The Stanford report only adds the quantitative seal to a story that was already being written.

One element the report adds to this scenario is the American talent drain, which represents perhaps the most underestimated crack in US primacy. The number of AI researchers and developers moving to the United States has collapsed by 89% compared to 2017, with an 80% drop in the last year. The United States remains the country with the largest stock of AI talent, but it attracts new brains at the lowest rate in ten years. It's the kind of indicator that doesn't impact today's benchmarks but defines the trajectory of the next five years.

The World's Fragile Infrastructure

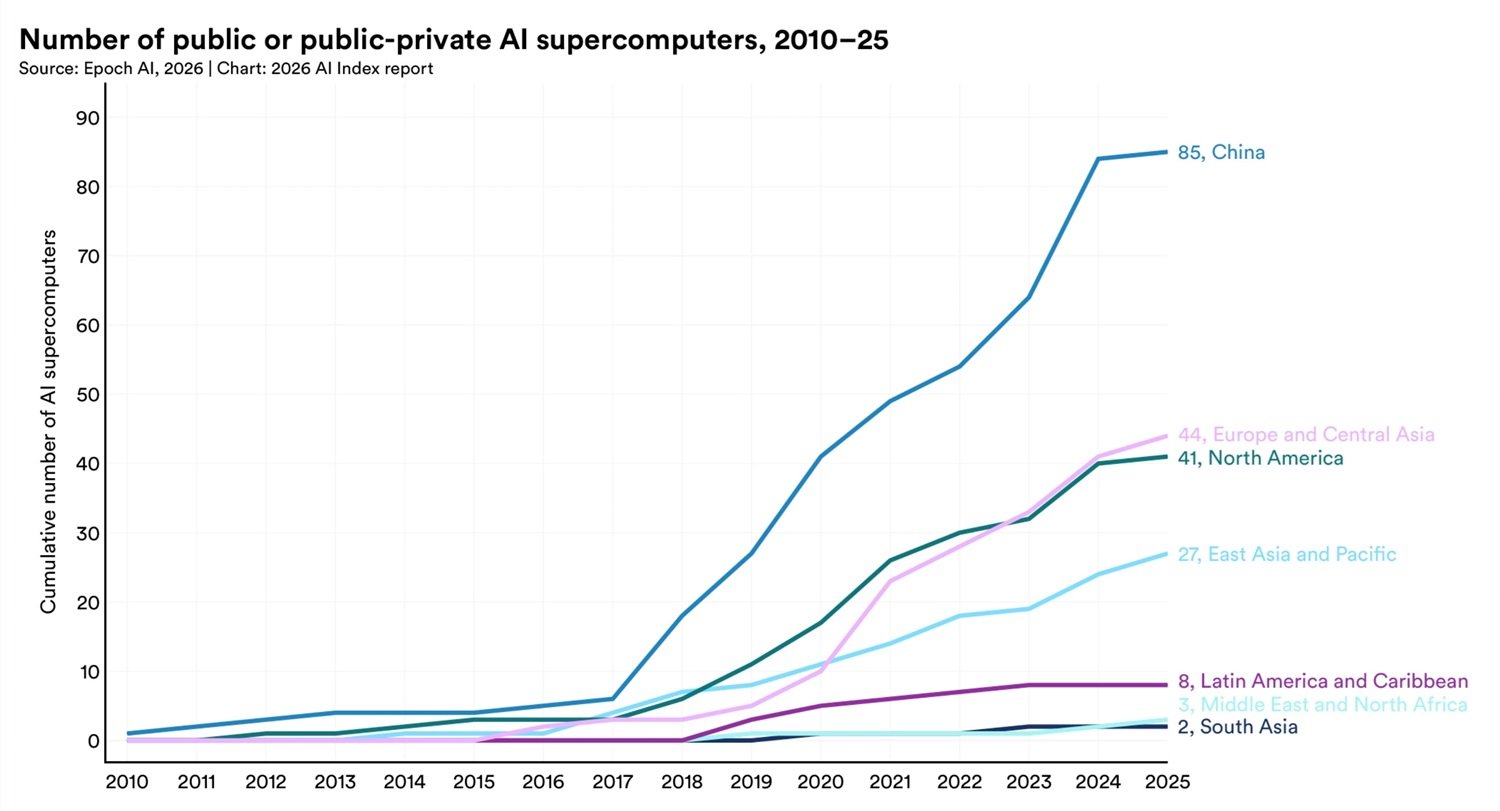

Behind every response generated by an AI model is a material supply chain of enormous proportions and underestimated structural fragility. The United States hosts 5,427 AI data centers, more than ten times any other country, and consumes more energy than any other region for this purpose. Global processing capacity for AI has grown 3.3 times per year since 2022, reaching the equivalent of 17.1 million Nvidia H100 cards. Nvidia controls over 60% of this compute.
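The compounding behind that growth figure is easy to underestimate. As a rough sketch, the Python below back-calculates the starting capacity implied by the 17.1 million figure. It assumes the 3.3x rate held for three full years (2022 to 2025); that span is an assumption, since the endpoints are not stated above, and the function names are purely illustrative.

```python
def implied_base(final: float, annual_factor: float, years: int) -> float:
    """Starting value that reaches `final` after compounding for `years`."""
    return final / annual_factor ** years


def project(base: float, annual_factor: float, years: int) -> float:
    """Compound `base` forward by a constant annual growth factor."""
    return base * annual_factor ** years


FINAL_H100_EQUIVALENTS = 17.1e6  # figure cited from the report
ANNUAL_FACTOR = 3.3              # figure cited from the report
YEARS = 3                        # ASSUMPTION: 2022 -> 2025

base = implied_base(FINAL_H100_EQUIVALENTS, ANNUAL_FACTOR, YEARS)
total_growth = ANNUAL_FACTOR ** YEARS

print(f"Implied 2022 capacity: about {base:,.0f} H100-equivalents")
print(f"Cumulative growth over {YEARS} years: about {total_growth:.1f}x")
```

Under that assumption, three years at 3.3x compound to roughly a 36-fold increase, which would put the 2022 starting point somewhere under half a million H100-equivalents. A different time span changes the result substantially, so the output is an order-of-magnitude illustration and not a figure from the report.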

The most geopolitically relevant figure, however, is a different one. Nearly all the chips that power this infrastructure are produced by a single company: TSMC, based in Taiwan. The report states it bluntly: the global AI hardware supply chain depends on a single foundry on a contested island. There are few comparisons for this concentration of systemic risk (perhaps European energy dependence on Russian gas before 2022), but with one crucial difference: there is no ready-made alternative supplier for AI chips. TSMC started its first expansion in Arizona in 2025, but American capacity remains a fraction of Taiwanese capacity.

The environmental impact completes the picture. The training of Grok 4 produced approximately 72,816 tons of CO₂ equivalent. The power capacity of AI data centers reached 29.6 gigawatts, comparable to the peak consumption of the entire state of New York. Estimates of the water consumption of GPT-4o inference indicate annual use that could exceed the needs of 12 million people. AI is not a virtual industry: it has a physical body, and that body is growing faster than our energy infrastructures.

Work, Adoption, and Value: Who Wins, Who Loses

The economy chapter of the report is the most anticipated by non-specialists, and this year it brings more granular data than usual. Productivity in sectors such as customer support and software development has increased by between 14% and 26% where AI has been systematically integrated into the workflow. The effects, however, are not uniform: gains are strongest in routine tasks and weaker, or even negative, in tasks requiring complex judgment.

The most discussed finding concerns young American developers. In the 22-25 age bracket, employment in the software sector fell by nearly 20% in 2024, while the number of senior developers continues to grow. The report avoids inferring causation from correlation, but the association is hard to ignore: AI productivity gains are concentrated exactly in the tasks typically assigned to junior profiles, and the employment impact materializes exactly in that bracket. It's as if AI were compressing the entry level of the profession, erasing the apprenticeship path that for decades turned graduates into expert developers.

The adoption pattern has surprising geographic characteristics. Singapore is at 61% and the United Arab Emirates at 54%, both above what their GDP per capita would predict. The United States, home to the main AI developers, ranks 24th with 28.3%: proximity to the industry is not enough to generate widespread adoption.

Governance, Safety, Transparency: The Systemic Delay

The most worrying chapter of the report is not the one on technical capabilities, but the one on responsible AI. Almost all major labs publish results on capability benchmarks. But coverage of safety, fairness, and governance benchmarks remains sporadic and uneven, making any systematic comparison impossible. Documented AI-related incidents rose to 362 in 2025, from 233 in 2024. And the report flags an insidious technical problem: improving one dimension of responsible AI, such as safety, can degrade another, such as accuracy.
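To put the incident count in proportion, it helps to express the jump as a relative change. The short Python sketch below does only that arithmetic on the two figures cited above; the helper name is illustrative.

```python
def percent_change(old: float, new: float) -> float:
    """Relative change from `old` to `new`, expressed in percent."""
    if old == 0:
        raise ValueError("relative change is undefined for a zero baseline")
    return (new - old) / old * 100.0


incidents_2024 = 233  # figure cited from the report
incidents_2025 = 362  # figure cited from the report

increase = percent_change(incidents_2024, incidents_2025)
print(f"Documented incidents rose by about {increase:.1f}% year over year")
```

That works out to an increase of roughly 55% in a single year. The count covers documented incidents only, so the calculation says nothing about how many go unreported.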

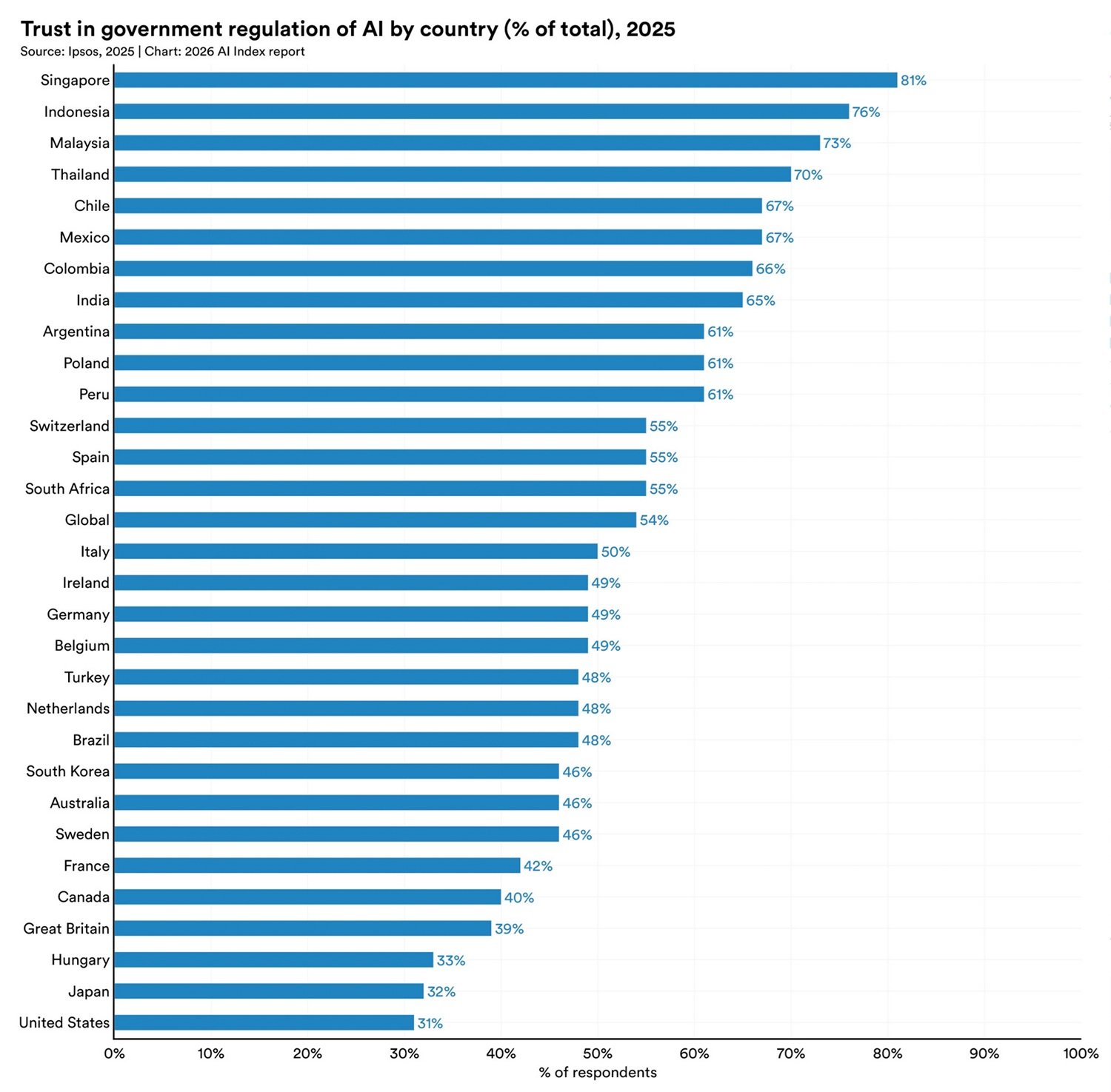

On the governance front, 2025 saw movements in opposite directions. The EU AI Act brought the first prohibitions into force. The United States shifted toward deregulation. Japan, South Korea, and Italy approved national laws. More than half of the new national strategies adopted in 2025 come from developing countries entering the regulatory debate for the first time. AI sovereignty—the ability to autonomously control one's own infrastructure and models—has become the central organizing principle for many of these strategies.

The finding on public trust is the most unsettling of the entire report. Among the countries included in the survey, the United States shows the lowest level of trust in its own government to regulate AI: 31%. The European Union is considered more reliable than the United States or China. For the country that hosts OpenAI, Google DeepMind, Anthropic, and xAI, it is a verdict that says a lot about the disconnect between industrial capacity and regulatory legitimacy. The perception gap between experts and the public sums all this up: 73% of experts expect a positive impact of AI on their work, compared to only 23% of the public. A fifty-percentage-point distance on one of the central issues of our time.
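The gap can be read in two ways: as a difference in percentage points, or as a ratio between the two shares. The sketch below computes both from the two survey figures cited above; the function names are illustrative.

```python
def point_gap(a_pct: float, b_pct: float) -> float:
    """Absolute difference between two shares, in percentage points."""
    return a_pct - b_pct


def share_ratio(a_pct: float, b_pct: float) -> float:
    """How many times larger share `a_pct` is than share `b_pct`."""
    if b_pct == 0:
        raise ValueError("ratio is undefined when the second share is zero")
    return a_pct / b_pct


experts_positive = 73.0  # % of experts, figure cited from the report
public_positive = 23.0   # % of the public, figure cited from the report

gap = point_gap(experts_positive, public_positive)
ratio = share_ratio(experts_positive, public_positive)

print(f"Gap: {gap:.0f} percentage points")
print(f"Experts are about {ratio:.1f} times as likely to expect a positive impact")
```

The fifty-point figure is a difference in percentage points. Expressed as a ratio, experts are a little over three times as likely as the public to expect a positive effect, which is why the two units should not be used interchangeably.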

AI in Science, Medicine, Education

The 2026 report introduces for the first time two standalone chapters on science and medicine. In the scientific field, frontier models outperform human chemists on average in the ChemBench benchmark, and a genomic model with 200 million parameters beat systems nearly two hundred times larger. The "bigger is better" law is cracking in specialized domains. But the same models score below 20% in replicating astrophysical experiments: the jagged frontier applies in the lab as well.

In medicine, automated documentation tools, which generate clinical notes from patient-physician interviews, saw significant adoption in 2025, with physicians reporting up to 83% time savings in drafting. The problem is the evidence: a review of over 500 clinical studies found that nearly half used exam questions instead of real patient data, and only 5% were based on authentic clinical data. The gap between promise and proof is still wide.

In education, more than 80% of students use AI for school assignments, but only half of schools have policies in place and just 6% of teachers consider those policies clear. New AI doctorates in the US and Canada grew by 22% between 2022 and 2024, but that increase went entirely toward academia, not industry. More high-level training, less direct transfer to the productive sector: a paradox the report records without resolving.

Open Questions: Who Controls, Who Benefits, Who Pays

The Stanford report does not conclude with answers. It concludes with questions, and this is its most precious quality in a sector where certainties are sold at market prices.

On control: model production remains concentrated in a handful of private organizations, and the best systems in the world are less and less transparent, with 80 of the 95 notable models of 2025 released without training code. Those who are not Google, OpenAI, Anthropic, Alibaba, or DeepSeek operate in an ecosystem that depends on decisions they do not control. On value: AI generates measurable wealth, but its distribution is highly asymmetrical. A few operators collect the revenues, junior workers are starting to pay the price of automation, and consumers receive free tools with dependencies and risks still poorly understood. On costs: the environmental footprint grows without being priced, junior roles disappear along with the training path that produces tomorrow's experts, and governance costs silently accumulate as capability benchmarks rise.

Every transformative technology redraws infrastructure, work, and power. The 2026 Stanford report documents, with data in hand, that AI is doing exactly this, faster than our systems of measurement, regulation, and adaptation can follow. The news is not that AI is improving. It is that the gap between its speed and our ability to manage it widens with every edition of the report. And this, for now, is the most jagged frontier of all.